Software testing is evolving from scripts to systems.

For over two decades, automation meant writing instructions:

- Click this

- Validate that

- Compare expected vs actual

But AI agents do something fundamentally different.

They don’t just execute rules.

They perceive, reason, decide, and optimize.

According to research from Gartner, autonomous systems will play a central role in enterprise workflows over the next few years. Meanwhile, McKinsey & Company estimates AI-driven automation could significantly improve productivity across software functions.

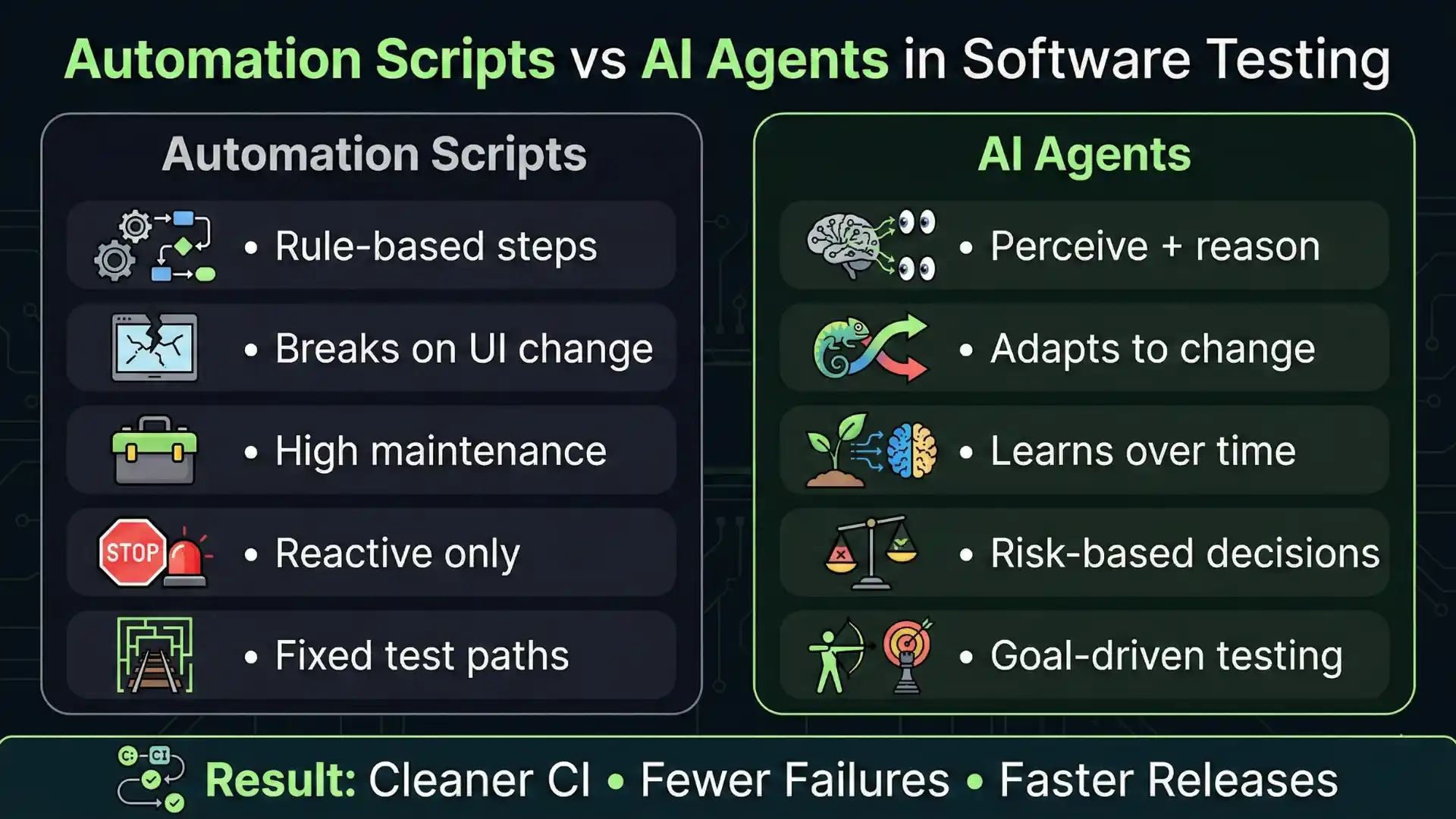

The real shift in QA is not automation vs manual testing.

It is static scripts vs intelligent agents.

AI Agents in Software Testing

AI agents in software testing are intelligent systems that go beyond scripts by perceiving, reasoning, deciding, and improving over time. Unlike traditional automation that follows fixed steps, AI agents can adapt to UI changes, prioritize high-risk scenarios, reduce flaky failures using history, and even coordinate multiple testing tasks (UI, API, logs, and reporting). The most common types are reflex, model-based, goal-based, utility-based, learning, multi-agent, and autonomous agents—together enabling faster, smarter, and more reliable QA.

Key Takeaways:

- AI agents shift testing from scripts to intelligent systems

- Learning agents improve test accuracy over time

- Autonomous agents enable end-to-end testing automation

- QA roles are evolving toward AI orchestration

To understand this shift, we must understand the 7 types of AI agents — and more importantly, how they apply to software testing.

Beyond Automation: 7 AI Agents Transforming Software Testing

1. Simple Reflex Agents – Rule-Based Automation

Definition:

Acts only on current input using predefined rules.

In software testing, this resembles traditional automation frameworks.

Example:

- If login fails → capture screenshot

- If API status code ≠ 200 → fail test

Most Selenium and Playwright scripts operate at this level.

They react to inputs but lack memory or learning capability.
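The two rules above can be sketched as a condition-action function. This is a minimal illustration, not a real Selenium or Playwright script: the `Observation` fields and the action names are hypothetical, chosen only to mirror the examples in this section.

```python
from dataclasses import dataclass


@dataclass
class Observation:
    """What the agent sees right now; nothing is remembered between calls."""
    login_succeeded: bool
    api_status_code: int


def reflex_actions(obs: Observation) -> list[str]:
    """Map the current observation to actions using fixed condition-action rules."""
    actions = []
    if not obs.login_succeeded:
        actions.append("capture_screenshot")  # rule 1: login failed
    if obs.api_status_code != 200:
        actions.append("fail_test")           # rule 2: unexpected status code
    return actions


print(reflex_actions(Observation(login_succeeded=False, api_status_code=500)))
# ['capture_screenshot', 'fail_test']
```

Because the function only looks at the current observation, it gives the same answer on the first run and the thousandth, which is exactly the lack of memory described above.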

Limitation:

- No contextual awareness

- No historical learning

- High maintenance when UI changes

This is where traditional automation begins — and often where it gets stuck.

2. Model-Based Agents – Stateful Intelligence

Model-based agents maintain internal state.

Instead of reacting blindly, they remember past events and understand system behavior over time.

In QA, this enables:

- Flaky test detection using historical failure data

- Tracking recurring element instability

- Context-aware retries in CI/CD pipelines

This is where automation becomes intelligent — not just reactive.
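One way to picture the internal state is a rolling pass/fail history per test. The sketch below is an assumption about how such tracking could work, not a feature of any specific tool: the class name, the `window` and `min_flips` parameters, and the "count the flips" heuristic are all illustrative.

```python
from collections import defaultdict, deque


class FlakyTestTracker:
    """Keeps a rolling pass/fail history per test (the agent's internal state)."""

    def __init__(self, window: int = 10, min_flips: int = 3):
        self.min_flips = min_flips
        # Each test remembers only its most recent `window` results.
        self.history = defaultdict(lambda: deque(maxlen=window))

    def record(self, test_name: str, passed: bool) -> None:
        self.history[test_name].append(passed)

    def flips(self, test_name: str) -> int:
        """Count pass/fail transitions between consecutive runs."""
        runs = list(self.history[test_name])
        return sum(1 for a, b in zip(runs, runs[1:]) if a != b)

    def is_flaky(self, test_name: str) -> bool:
        return self.flips(test_name) >= self.min_flips

    def should_retry(self, test_name: str) -> bool:
        """Context-aware retry: only retry tests with a flaky history."""
        return self.is_flaky(test_name)


tracker = FlakyTestTracker()
for result in [True, False, True, False, True]:
    tracker.record("test_checkout", result)
for result in [False, False, False]:
    tracker.record("test_login", result)

print(tracker.is_flaky("test_checkout"))  # True: 4 flips in 5 runs
print(tracker.is_flaky("test_login"))     # False: it fails consistently
```

A test that fails every time is not retried here, since a consistent failure is more likely a real defect than instability.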

3. Goal-Based Agents – Objective-Driven Testing

Goal-based agents focus on outcomes rather than predefined steps.

Instead of executing scripted flows, they pursue objectives.

Example:

Goal: Validate checkout flow.

The agent determines:

- Input variations

- Navigation paths

- Edge cases

- Data permutations

This reduces dependency on rigid scripts and increases adaptability.

Goal-based systems are especially powerful in dynamic applications where UI flows frequently change.
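A simple way to see the difference is to give the agent a model of the application and a target state, and let it search for a path. The sketch below uses breadth-first search over a hypothetical checkout navigation graph; the state and action names in `TRANSITIONS` are invented for illustration, and a real agent would discover them from the application rather than from a hard-coded dictionary.

```python
from collections import deque

# Hypothetical navigation model: state -> {action: next_state}
TRANSITIONS = {
    "cart": {"proceed": "shipping"},
    "shipping": {"submit_address": "payment", "back": "cart"},
    "payment": {
        "pay_card": "order_confirmed",
        "pay_wallet": "order_confirmed",
        "back": "shipping",
    },
    "order_confirmed": {},
}


def plan_actions(start: str, goal: str):
    """Breadth-first search: shortest action list from start to goal, or None."""
    queue = deque([(start, [])])
    visited = {start}
    while queue:
        state, actions = queue.popleft()
        if state == goal:
            return actions
        for action, next_state in TRANSITIONS[state].items():
            if next_state not in visited:
                visited.add(next_state)
                queue.append((next_state, actions + [action]))
    return None  # goal is unreachable from this start state


print(plan_actions("cart", "order_confirmed"))
# ['proceed', 'submit_address', 'pay_card']
```

If the shipping step is later renamed or a new step is added, only the model changes; the goal and the search stay the same.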

4. Utility-Based Agents – Optimization Engines

Utility-based agents select actions based on maximizing value or minimizing cost.

In enterprise QA environments, this is critical.

Applications in testing include:

- Risk-based test prioritization

- Smart regression suite optimization

- CI runtime reduction

- Cost-aware execution strategies

Instead of running 2,000 tests blindly, a utility-based agent may intelligently run the 400 most impactful ones.

This transforms QA from effort-driven to impact-driven.
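As a rough sketch of this idea, the function below picks tests by estimated risk per second of runtime until a time budget is used up. The `failure_risk` values, the test names, and the budget are made-up inputs, and the greedy ratio rule is a simple heuristic rather than an optimal selection strategy.

```python
from dataclasses import dataclass


@dataclass
class CandidateTest:
    name: str
    failure_risk: float  # assumed estimate that the test catches a regression
    runtime_s: float     # expected runtime in seconds


def select_tests(tests: list[CandidateTest], budget_s: float) -> list[CandidateTest]:
    """Greedily pick tests with the best risk-per-second until the budget is spent."""
    ranked = sorted(tests, key=lambda t: t.failure_risk / t.runtime_s, reverse=True)
    chosen, used = [], 0.0
    for test in ranked:
        if used + test.runtime_s <= budget_s:
            chosen.append(test)
            used += test.runtime_s
    return chosen


suite = [
    CandidateTest("checkout_e2e", failure_risk=0.30, runtime_s=120),
    CandidateTest("login_api", failure_risk=0.10, runtime_s=5),
    CandidateTest("search_ui", failure_risk=0.05, runtime_s=60),
    CandidateTest("payment_api", failure_risk=0.25, runtime_s=10),
]

print([t.name for t in select_tests(suite, budget_s=140)])
# ['payment_api', 'login_api', 'checkout_e2e']
```

The low-value, slow `search_ui` test is the one dropped when the budget is tight, which is the trade-off a utility function is meant to make explicit.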

5. Learning Agents – Continuous Improvement Systems

Learning agents improve their performance over time using feedback loops.

They refine strategies based on previous outcomes.

In software testing, this can mean:

- Improving test case generation sprint after sprint

- Enhancing bug prediction models

- Adapting automatically to UI structure changes

- Identifying patterns in defect leakage

Research from Capgemini highlights how AI-driven quality engineering initiatives reduce defect leakage and improve release stability in enterprise settings.

This is where QA becomes adaptive instead of static.
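The feedback loop can be illustrated with a very small learner that adjusts its failure estimate for each test after every run. This is only a sketch under stated assumptions: an exponential moving average stands in for whatever model a real tool would use, and the class name, `learning_rate`, and `prior` are hypothetical.

```python
class FailureRateLearner:
    """Learns a per-test failure probability from observed outcomes."""

    def __init__(self, learning_rate: float = 0.2, prior: float = 0.5):
        self.learning_rate = learning_rate
        self.prior = prior  # starting estimate for tests never seen before
        self.estimates: dict[str, float] = {}

    def update(self, test_name: str, failed: bool) -> float:
        """Move the estimate a step toward the latest outcome (the feedback)."""
        old = self.estimates.get(test_name, self.prior)
        target = 1.0 if failed else 0.0
        new = old + self.learning_rate * (target - old)
        self.estimates[test_name] = new
        return new


learner = FailureRateLearner()
for failed in [True, True, False, True]:
    estimate = learner.update("test_payment", failed)
    print(f"estimated failure probability: {estimate:.3f}")
```

Each new result nudges the estimate, so recent behavior counts more than old behavior. Those estimates could then feed prioritization or defect prediction in later sprints.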

6. Multi-Agent Systems – Collaborative Testing Intelligence

A single intelligent agent can be powerful.

Multiple coordinated agents can be transformational.

In a multi-agent QA ecosystem:

- One agent generates test scenarios

- Another validates APIs

- Another analyzes logs for anomalies

- Another predicts release risks

Together, they form an orchestrated AI-driven testing pipeline.

This mirrors how modern distributed systems operate — not as monoliths, but as collaborative components.
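The division of labor above can be sketched as small functions that read from and write to a shared context. Everything here is a simplification for illustration: the agent functions, the context keys, and the stubbed inputs are hypothetical, and the agents run one after another, whereas a real multi-agent system would more likely run them concurrently and exchange messages.

```python
def scenario_agent(ctx: dict) -> dict:
    """Generates test scenarios for the feature under test."""
    feature = ctx["feature"]
    return {"scenarios": [f"{feature}: happy path", f"{feature}: invalid input"]}


def api_agent(ctx: dict) -> dict:
    """Flags endpoints whose observed status code is not 200."""
    failures = [path for path, code in ctx["api_responses"].items() if code != 200]
    return {"api_failures": failures}


def log_agent(ctx: dict) -> dict:
    """Flags log lines that look anomalous (here: anything containing ERROR)."""
    return {"anomalies": [line for line in ctx["logs"] if "ERROR" in line]}


def risk_agent(ctx: dict) -> dict:
    """Combines the other agents' findings into a release risk level."""
    risky = bool(ctx["api_failures"] or ctx["anomalies"])
    return {"release_risk": "high" if risky else "low"}


def run_pipeline(agents, ctx: dict) -> dict:
    """Orchestrator: each agent sees the shared context and adds its findings."""
    for agent in agents:
        ctx = {**ctx, **agent(ctx)}
    return ctx


result = run_pipeline(
    [scenario_agent, api_agent, log_agent, risk_agent],
    {
        "feature": "checkout",
        "api_responses": {"/cart": 200, "/pay": 500},  # stubbed observations
        "logs": ["INFO start", "ERROR payment timeout"],
    },
)
print(result["release_risk"])  # high
```

The risk agent never calls the API or reads the logs itself; it relies on what the other agents wrote into the shared context.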

7. Autonomous Agents – Agentic QA Systems

At the highest level, autonomous agents do the following with minimal human intervention:

- Plan

- Execute

- Analyze

- Optimize

- Report
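The five steps can be read as a loop that feeds its own results back into the next cycle. The sketch below is a toy version under stated assumptions: the function names are hypothetical, `run_test` is a stub supplied by the caller, and "optimize" is reduced to counting failures so that unstable tests run first next time.

```python
def plan_order(tests, failure_counts):
    """Plan: run the tests that failed most often in earlier cycles first."""
    return sorted(tests, key=lambda t: failure_counts.get(t, 0), reverse=True)


def execute(ordered, run_test):
    """Execute: run each test and record whether it passed."""
    return {t: run_test(t) for t in ordered}


def analyze(results):
    """Analyze: collect the tests that failed."""
    return [t for t, passed in results.items() if not passed]


def optimize(failure_counts, failed):
    """Optimize: update the failure counts that drive the next plan."""
    updated = dict(failure_counts)
    for t in failed:
        updated[t] = updated.get(t, 0) + 1
    return updated


def report(cycle, results, failed):
    """Report: summarize the cycle in one line."""
    passed = len(results) - len(failed)
    return f"cycle {cycle}: {passed}/{len(results)} passed; failed: {failed or 'none'}"


def autonomous_cycle(tests, run_test, cycles=2):
    failure_counts, reports = {}, []
    for cycle in range(1, cycles + 1):
        ordered = plan_order(tests, failure_counts)
        results = execute(ordered, run_test)
        failed = analyze(results)
        failure_counts = optimize(failure_counts, failed)
        reports.append(report(cycle, results, failed))
    return reports, plan_order(tests, failure_counts)


# Stub runner: pretend only the payment test fails.
reports, next_plan = autonomous_cycle(
    ["test_login", "test_payment", "test_search"],
    run_test=lambda name: name != "test_payment",
)
print(reports[0])  # cycle 1: 2/3 passed; failed: ['test_payment']
print(next_plan)   # ['test_payment', 'test_login', 'test_search']
```

No human reorders the suite between cycles; the loop promotes the failing test on its own, which is the core of the autonomy described here.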

In software testing, this could look like:

- Self-healing automation frameworks

- AI-generated regression suites

- Autonomous release validation pipelines

- Intelligent defect triage systems

This is not theoretical.

It is the direction in which enterprise QA is moving.

The Real Transformation: From Test Execution to AI Orchestration

Traditional QA roles focused on:

- Writing test scripts

- Debugging failures

- Maintaining frameworks

The next generation of QA professionals will focus on:

- Designing AI-driven test architectures

- Training and tuning intelligent agents

- Monitoring AI decision quality

- Governing responsible AI usage in testing

The tester is not being replaced.

The tester is being elevated.

Traditional Automation vs AI Agents in Software Testing

| Aspect | Traditional Automation | AI Agents in Testing |
|---|---|---|
| Approach | Rule-based scripts | Goal-driven intelligence |
| Flexibility | Low (breaks easily) | High (adapts to changes) |
| Maintenance | High effort | Self-improving systems |
| Test Execution | Fixed steps | Dynamic decision-making |
| Handling UI Changes | Manual updates | Self-healing capabilities |
| Test Coverage | Limited by scripts | Expands with learning |
| CI/CD Stability | Flaky failures common | Reduced failures |
| Intelligence Level | Reactive | Predictive & adaptive |

Why This Matters Beyond 2026

These 7 agent types are not trends.

They are foundational AI architectures.

While tools will evolve, these underlying models will continue to shape intelligent systems — including testing ecosystems — for years to come.

Understanding these agent types allows QA leaders to:

- Evaluate AI tools critically

- Avoid marketing hype

- Build scalable testing architectures

- Make strategic automation investments

Final Thought

Automation executes instructions.

AI agents pursue objectives.

The future of software testing will not be defined by how many scripts you write — but by how intelligently your systems validate quality.

For QA professionals and leaders who want to remain relevant in the AI era, learning AI in software testing is no longer optional. It is a strategic capability.

The era of AI-powered QA has already begun.

FAQs

1. What are AI agents in software testing?

AI agents are intelligent systems that can perceive, analyze, and make decisions to automate and optimize testing processes beyond traditional scripts.

2. How do AI agents improve software testing?

They reduce manual effort, detect patterns, optimize test execution, and enable self-healing automation, improving efficiency and accuracy.

3. What are the types of AI agents in testing?

The 7 types include:

- Simple reflex agents

- Model-based agents

- Goal-based agents

- Utility-based agents

- Learning agents

- Multi-agent systems

- Autonomous agents

4. Is AI replacing software testers?

No. AI enhances testers by automating repetitive tasks and enabling them to focus on strategy, analysis, and quality engineering.

5. What are AI agents in software testing with examples?

AI agents in software testing are intelligent systems that can analyze, decide, and execute testing tasks. For example, a model-based agent can detect flaky tests using historical failure data, while a goal-based agent can validate a checkout flow without predefined steps.

6. How do AI agents differ from automation scripts?

AI agents are systems that go beyond automation scripts by making decisions, adapting to changes, and improving over time. They help in smarter test execution, defect prediction, and self-healing automation.

7. What are some examples of AI agents?

Examples of AI agents include chatbots, recommendation systems, and self-driving cars. In testing, examples include self-healing automation tools, AI test generators, and defect prediction systems.

8. How are AI agents used in test automation?

AI agents in test automation help generate test cases, detect UI changes, prioritize test execution, and reduce flaky failures, making automation faster and more reliable.

9. What is an AI agent testing framework?

An AI agent testing framework combines automation tools with AI capabilities like learning, decision-making, and optimization. It enables adaptive testing instead of fixed script execution.

10. Is ChatGPT an AI agent?

ChatGPT is an AI-powered system with conversational abilities, but it becomes an AI agent when integrated with tools, memory, and decision-making capabilities to perform tasks autonomously.

11. How to test AI agents?

Testing AI agents involves validating their accuracy, decision-making, learning behavior, and performance. This includes scenario testing, bias checking, and monitoring outputs over time.

12. What are examples of generative AI agents in testing?

Examples include AI tools that generate test cases, create automation scripts, simulate user behavior, and produce test data using models like GPT or other large language models.

Author’s Bio:

Content Writer at Testleaf, specializing in SEO-driven content for test automation, software development, and cybersecurity. I turn complex technical topics into clear, engaging stories that educate, inspire, and drive digital transformation.

Ezhirkadhir Raja

Content Writer – Testleaf