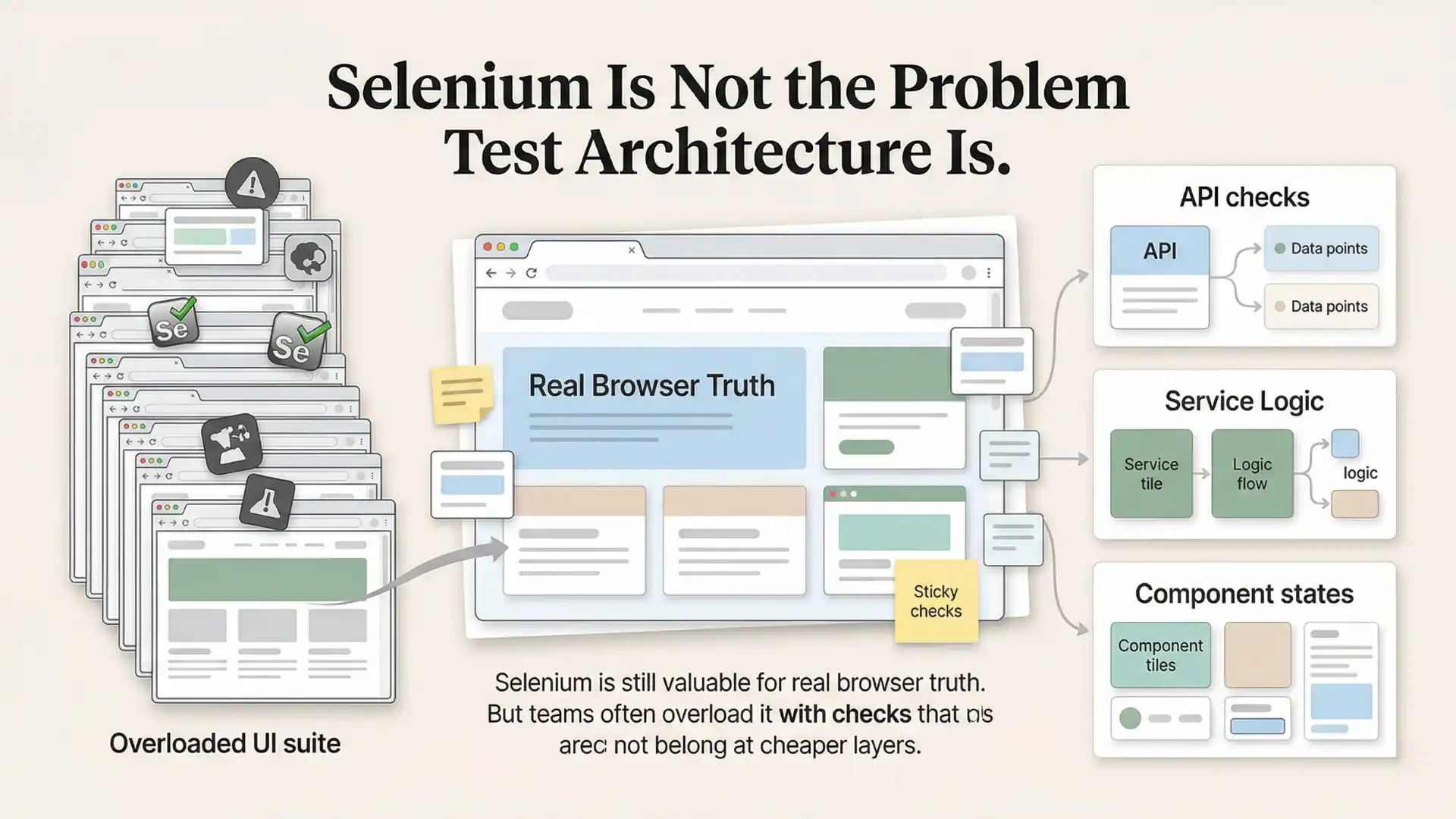

Many QA teams say they want fast feedback, stable pipelines, and confidence at scale. But then they use a full browser journey to verify a single validation message, one pricing badge, or a tiny widget state. That is not a Selenium problem. That is a test architecture problem.

Selenium still matters. Deeply. Its core job is clear: automate the browser the way a user would. Selenium’s own documentation describes WebDriver as driving a browser natively, as a user would, which is exactly why it remains valuable for cross-browser confidence and real user journeys.

But that same strength also explains why Selenium becomes expensive when teams try to use it for everything.

Why do Selenium suites become slow and flaky?

Selenium suites usually become slow and flaky not because Selenium is the wrong tool, but because teams use browser automation for checks that should be tested at API, service, or isolated UI-state layers.

Key takeaways

- Selenium is still the right tool for real browser truth.

- Many UI suites are overloaded with logic-heavy checks.

- Better test architecture means placing checks at the cheapest trustworthy layer.

- APIs often verify business logic faster and more clearly than UI tests.

- Component-friendly environments reduce unnecessary end-to-end drag.

Where the friction really begins

The trouble starts when a browser-first tool is asked to carry the full weight of a modern test strategy.

A team wants to validate a checkout total. Or a dashboard card. Or a disabled state on a button. Instead of testing the business rule at a cheaper layer, the team spins up login, navigation, test data, waits, page synchronization, and full rendering just to confirm one outcome. The test passes sometimes, flakes other times, and slowly becomes harder to trust than the feature it was meant to protect.

This is why the classic test pyramid still matters. Martin Fowler’s practical explanation of the pyramid remains one of the most useful reminders in testing: not all checks should live at the same level, and UI tests should be only one part of a balanced portfolio.

The healthiest Selenium strategy usually uses less Selenium, but in more important places.

Selenium’s real job

Selenium is excellent when the question is: “Does this flow work in a real browser?”

That includes:

- critical user journeys

- cross-browser validation

- rendering plus interaction confidence

- integrations where the whole system matters

That is where browser automation earns its cost.

But Selenium is not an isolated component-testing tool. It was built to automate web browsers, not to mount a single UI component in a clean-room environment with mocked dependencies and rapid feedback loops.

So when teams try to force component-level confidence through Selenium alone, they usually invent compensating layers around it.

What smart teams do instead

Mature teams do not abandon Selenium. They reposition it.

They keep Selenium where browser truth matters, and they move other checks downward to layers that are cheaper, faster, and easier to debug.

That shift often happens in three moves.

1. Keep Selenium for browser truth

If a scenario truly depends on routing, rendering, browser compatibility, or a high-value end-to-end flow, Selenium is still the right choice.

The mistake is not using Selenium.

The mistake is using Selenium for checks that never needed the browser in the first place.

2. Move logic-heavy verification to APIs

A surprising amount of UI suite weight comes from business logic wearing a UI costume.

Price calculations. Role permissions. Validation rules. Response mapping. State transitions. These are often asserted through the interface simply because that is where the team started, not because the browser is the best place to prove them.

When those checks move to API or service layers, teams usually gain three things immediately: faster execution, clearer failures, and less maintenance noise. That is exactly the kind of architectural rethink modern QE teams are under pressure to make. Capgemini’s World Quality Report 2025–26 frames QE as one of the enterprise functions with the strongest transformative potential, while the 2024 report found 57% of organizations still struggle with a lack of comprehensive test automation strategies.

Not every check deserves a browser.
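
As a rough sketch of what moving a check downward can look like, the Python example below asserts a checkout total directly against a pricing function, with no login, navigation, or rendering involved. The discount threshold, the rates, and the `checkout_total` name are assumptions invented for illustration; a real suite would call the team's own service or API endpoint instead.

```python
from decimal import Decimal, ROUND_HALF_UP

# Hypothetical business rule, invented for illustration only:
# subtotals of 100.00 or more get 10% off, then 8% tax is applied.
DISCOUNT_THRESHOLD = Decimal("100.00")
DISCOUNT_RATE = Decimal("0.10")
TAX_RATE = Decimal("0.08")


def checkout_total(subtotal: Decimal) -> Decimal:
    """Return the payable total for a subtotal under the assumed rule."""
    if subtotal >= DISCOUNT_THRESHOLD:
        discount = subtotal * DISCOUNT_RATE
    else:
        discount = Decimal("0")
    taxed = (subtotal - discount) * (Decimal("1") + TAX_RATE)
    # Round to cents the way a price display typically would.
    return taxed.quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)


def test_discount_applies_at_threshold():
    # 100.00 -> 90.00 after discount -> 97.20 with tax
    assert checkout_total(Decimal("100.00")) == Decimal("97.20")


def test_no_discount_just_below_threshold():
    # 99.99 * 1.08 = 107.9892 -> 107.99
    assert checkout_total(Decimal("99.99")) == Decimal("107.99")
```

Both tests can be collected by a runner such as pytest and finish in milliseconds. When one fails, the failure points at the pricing rule itself rather than at a locator, a wait, or a page load.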

3. Isolate reusable UI through component-friendly hosts

When teams need confidence in component behavior, many create local sandbox pages, Storybook-based environments, or thin host apps to exercise states without dragging the entire application along for the ride.

That pattern exists for a reason.

Storybook explicitly positions itself as a way to build, test, and document components in isolation, and its testing docs describe a clean-room environment where teams can explore component variations, mock dependencies, and cover UI behavior more efficiently.

This is the practical workaround many Selenium-heavy teams discover over time: isolate UI states outside full application flows, then reserve Selenium for the journeys that genuinely need end-to-end browser confidence.
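
To make the "thin host app" idea concrete, here is a minimal Python sketch of a sandbox page that renders one component state per URL. It is not Storybook and not any particular team's setup: the submit button, its three states, and every function name are hypothetical. The point is only that a state such as "disabled" can be reached with a single request instead of a full journey.

```python
from html import escape
from http.server import BaseHTTPRequestHandler, HTTPServer
from urllib.parse import parse_qs, urlparse

# Hypothetical component: a submit button with three named states.
STATES = {"enabled", "disabled", "loading"}


def render_submit_button(state: str) -> str:
    """Render the button markup for one named state."""
    if state not in STATES:
        raise ValueError(f"unknown state: {state!r}")
    disabled_attr = "" if state == "enabled" else " disabled"
    label = "Submitting..." if state == "loading" else "Submit"
    return (
        f'<button id="submit" data-state="{escape(state)}"'
        f"{disabled_attr}>{label}</button>"
    )


def render_host_page(query: str) -> tuple[int, str]:
    """Map a query string such as 'state=disabled' to (status, html)."""
    state = parse_qs(query).get("state", ["enabled"])[0]
    try:
        body = render_submit_button(state)
    except ValueError as exc:
        return 400, f"<p>{escape(str(exc))}</p>"
    return 200, f"<!doctype html><html><body>{body}</body></html>"


class HostHandler(BaseHTTPRequestHandler):
    """Serves the sandbox page; the query string selects the state."""

    def do_GET(self):
        status, html = render_host_page(urlparse(self.path).query)
        payload = html.encode("utf-8")
        self.send_response(status)
        self.send_header("Content-Type", "text/html; charset=utf-8")
        self.send_header("Content-Length", str(len(payload)))
        self.end_headers()
        self.wfile.write(payload)


def serve(port: int = 8000) -> None:
    """Run the sandbox host locally; not started on import."""
    HTTPServer(("127.0.0.1", port), HostHandler).serve_forever()
```

With `serve()` running, a browser test could open `http://127.0.0.1:8000/?state=disabled` and assert on that one state. The rendering functions can also be checked directly without starting a server at all.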

The real cost of getting this wrong

Overloaded teams often measure automation progress by the number of browser tests they have.

Mature teams measure confidence per unit of maintenance.

That difference changes everything.

An overloaded team:

- pushes every meaningful check through the UI

- keeps adding waits, retries, and patches

- accepts flakiness as “normal”

A mature team:

- asks which layer can prove this fastest

- uses the browser only when browser fidelity matters

- reduces suite weight before adding more tooling

That mindset matters more than the tool choice itself.

In fact, modern component-testing advocates make a similar argument. Storybook’s component-testing guidance says component tests hit a sweet spot: browser fidelity with better speed and reliability than broad end-to-end suites, while still complementing rather than replacing unit and E2E tests.

That is the key point many teams miss.

The goal is not more automation. The goal is better-placed automation.

A practical way to rethink a Selenium-heavy suite

If your Selenium suite feels slow, brittle, or expensive, the answer is not automatically a new tool. Start with a classification exercise.

Take your existing UI tests and sort them into three buckets:

Browser-critical

Needs real browser behavior, routing, rendering, session flow, or cross-browser confidence.

Logic-heavy

Mostly validating business rules, calculations, permissions, transformations, or state logic.

Reusable UI-state checks

Testing specific component states, visual conditions, or controlled interactions that do not need a full journey.

Then act accordingly.

Keep the first bucket in Selenium. Move the second toward APIs or service-level checks. Isolate the third through component-friendly environments such as Storybook or internal sandbox hosts.
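
The sorting step above can be sketched in a few lines of Python. This assumes each existing test already carries descriptive tags; the tag vocabulary, the `classify` and `sort_suite` names, and the rule that browser-critical wins when tags overlap are all illustrative assumptions, not an established convention.

```python
from collections import defaultdict
from enum import Enum


class Bucket(Enum):
    BROWSER_CRITICAL = "keep in Selenium"
    LOGIC_HEAVY = "move toward API or service-level checks"
    UI_STATE = "isolate in a component-friendly host"


# Hypothetical tag vocabulary; a real suite would use its own markers.
BROWSER_TAGS = {"journey", "routing", "rendering", "session", "cross-browser"}
LOGIC_TAGS = {"calculation", "permissions", "transformation", "state-logic"}
UI_STATE_TAGS = {"component-state", "visual", "interaction"}


def classify(tags: set[str]) -> Bucket:
    """Pick a bucket for one test; browser-critical wins on overlap."""
    if tags & BROWSER_TAGS:
        return Bucket.BROWSER_CRITICAL
    if tags & LOGIC_TAGS:
        return Bucket.LOGIC_HEAVY
    if tags & UI_STATE_TAGS:
        return Bucket.UI_STATE
    # Refuse to guess: an untagged test needs a human decision first.
    raise ValueError(f"no known tag in {sorted(tags)}")


def sort_suite(tests: dict[str, set[str]]) -> dict[Bucket, list[str]]:
    """Group test names by bucket, preserving input order."""
    buckets = defaultdict(list)
    for name, tags in tests.items():
        buckets[classify(tags)].append(name)
    return dict(buckets)


suite = {
    "test_checkout_journey": {"journey", "session"},
    "test_discount_math": {"calculation"},
    "test_button_disabled": {"component-state"},
}
for bucket, names in sort_suite(suite).items():
    print(f"{bucket.name}: {names} -> {bucket.value}")
```

Letting browser-critical win on overlap is a deliberately conservative choice: a test is only moved out of Selenium when nothing about it claims to need the browser.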

This is not a downgrade in quality. It is an upgrade in test economics.

Why this matters now

The pressure on QA teams is changing. According to the World Quality Report 2024, 68% of organizations were either actively using GenAI in QE or had roadmaps after successful pilots, and 72% reported faster automation processes where GenAI was applied. But the same report also shows that adoption alone does not fix weak strategy.

AI can accelerate authoring, analysis, and maintenance.

It cannot rescue a badly placed test suite.

If everything is end-to-end, nothing is optimized.

That is why this conversation is bigger than Selenium. It is about maturity. Teams that scale well eventually learn that quality is not just about automation volume. It is about putting each check at the layer where it is cheapest to run, easiest to understand, and most trustworthy when it fails.

Slow Selenium suites are often a test architecture problem, not a Selenium problem. Mature teams keep browser-critical checks in Selenium and move logic-heavy or reusable UI-state checks to cheaper layers.

Final thought

Selenium is not outdated. Selenium is often overloaded.

Used well, it remains one of the most important tools in browser automation. Used poorly, it becomes a carrier for tests that belong elsewhere.

The future of test strategy is not forcing one tool to do everything. It is building a balanced system where browser tests prove browser truth, APIs prove business behavior, and component-level environments prove UI states without unnecessary drag.

That is the shift mature QA teams make.

And once they make it, Selenium stops feeling heavy again.

If your team or career path still depends on building reliable browser automation, strengthening your foundation matters. Practical Selenium training in Chennai can help professionals go beyond basic script creation and learn how to design stable frameworks, improve locator strategy, reduce flakiness, and build smarter test architecture for real-world enterprise projects. That kind of hands-on learning is what turns Selenium knowledge into long-term automation confidence.

FAQs

Is Selenium the problem in slow UI suites?

Not always. In many cases, the bigger issue is test architecture, especially when browser automation is used for checks that do not truly need the browser.

What should Selenium be used for?

Selenium is best used for browser-critical checks such as real user journeys, cross-browser validation, rendering plus interaction confidence, and integrations where the full system matters.

What checks should move out of Selenium?

Logic-heavy checks like calculations, permissions, validation rules, response mapping, and state transitions often fit better at API or service layers. Reusable UI-state checks often fit better in isolated component-friendly hosts.

Why does the test pyramid still matter?

Because not every check belongs at the UI layer. A balanced test portfolio reduces maintenance cost, improves speed, and makes failures easier to understand.

How should teams rethink a Selenium-heavy suite?

Teams should classify tests into browser-critical, logic-heavy, and reusable UI-state checks, then keep only the browser-critical group in Selenium and move the others to better layers.

Author’s Bio:

Content Writer at Testleaf, specializing in SEO-driven content for test automation, software development, and cybersecurity. I turn complex technical topics into clear, engaging stories that educate, inspire, and drive digital transformation.

Ezhirkadhir Raja

Content Writer – Testleaf