This blog is a continuation of our previous articles — “AI Won’t Replace Testers — But It Will Replace How They Work” and “How Manual Testers Can Transition to AI-Driven QA in 6 Months.”

In those articles, we explored:

- How AI is reshaping the role of testers

- How manual testers can transition into AI-driven QA

Now let’s address the next critical question:

If AI can generate, automate, and optimize testing — why do we still need testers?

Why does AI testing need QA engineers?

AI testing needs QA engineers because AI systems can generate outputs but cannot guarantee that those outputs are correct, reliable, or appropriate to the context without human validation.

In This Guide You’ll Learn:

- Why AI cannot replace testers

- Risks in AI-generated outputs

- The evolving role of QA engineers

- How testing AI differs from traditional testing

The Biggest Misconception About AI in Testing

There’s a growing belief:

“AI can test everything automatically.”

On the surface, it feels true.

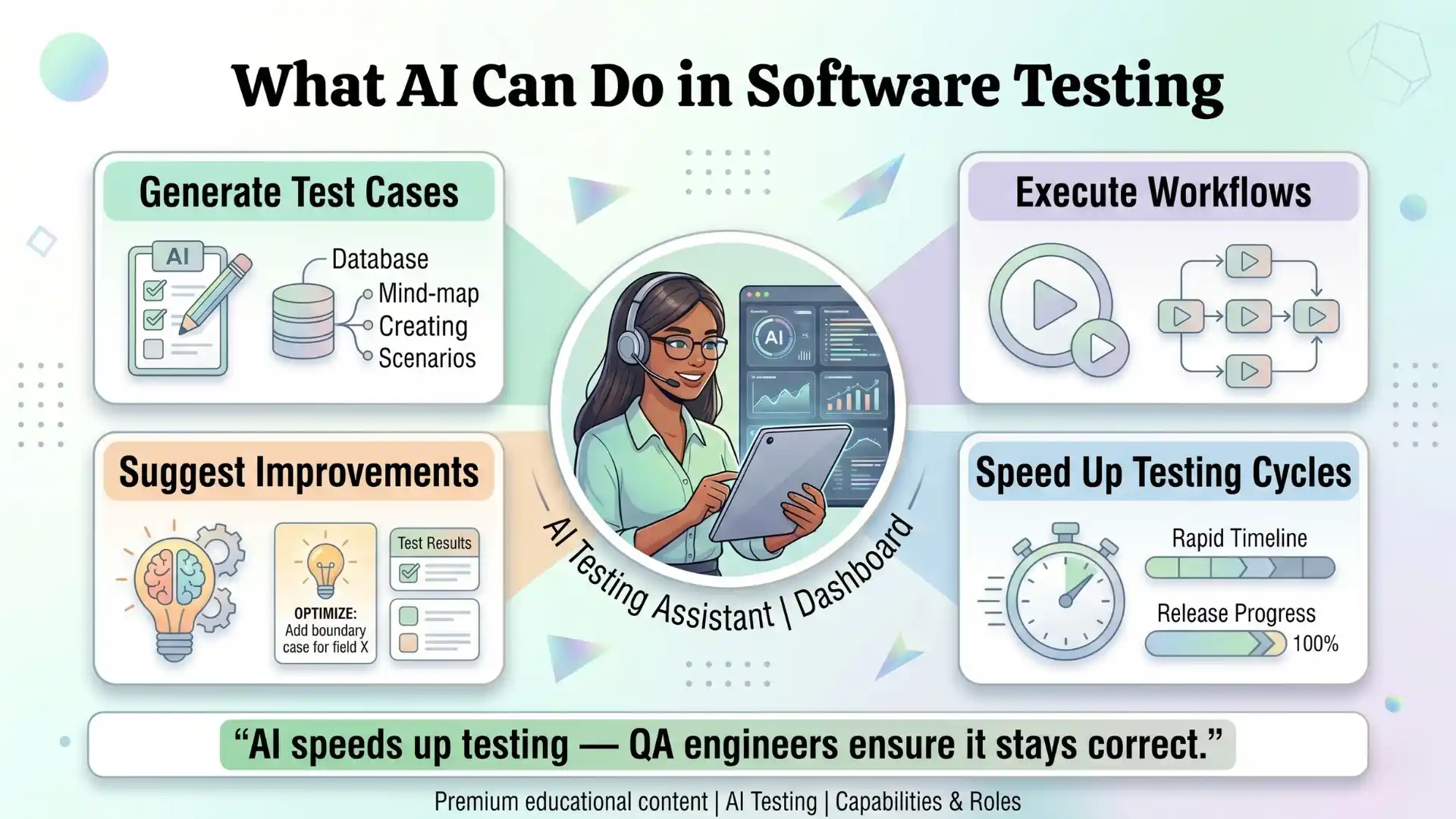

AI can:

- Generate test cases

- Execute workflows

- Suggest improvements

- Speed up testing cycles

But the reality is:

AI can produce outputs. It cannot guarantee correctness.

And that is where QA engineers become more important.

Can AI really test everything automatically?

No. AI can generate outputs, but it cannot guarantee that they are correct or that they fit the context.

AI Systems Are Not Reliable by Default

In a recent conversation with Babu Manickam, CEO and Co-Founder of QEagle and Testleaf, who has more than 25 years of experience in software testing and quality engineering and has mentored thousands of QA professionals into modern testing roles, one critical point stood out:

AI systems are powerful, but they are not trustworthy without validation.

1. AI Can Hallucinate — And It Looks Convincing

AI predicts outputs based on patterns.

That means:

- It can generate incorrect test cases

- It can produce invalid data

- It can give confident but wrong results

The risk is that incorrect outputs often appear correct.

Without validation, these errors can go unnoticed.

Can AI-generated test cases be trusted directly?

No. They must always be validated by QA engineers.
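As a rough illustration of what that validation can look like, the sketch below checks AI-generated test cases against a rule the team already trusts. Everything in it is an assumption made for the example: the `GeneratedCase` shape, the `reference_discount` rule (10% off orders of 100 or more), and the sample cases are hypothetical and do not come from any specific tool.

```python
from dataclasses import dataclass


@dataclass
class GeneratedCase:
    """One AI-generated test case (hypothetical shape for this sketch)."""
    name: str
    inputs: dict
    expected: object


def reference_discount(order_total: float) -> float:
    """Trusted rule written by the team (assumed): 10% off orders of 100 or more."""
    return round(order_total * 0.10, 2) if order_total >= 100 else 0.0


def review_cases(cases):
    """Split generated cases into accepted names and (name, reason) rejections."""
    accepted, rejected = [], []
    for case in cases:
        total = case.inputs.get("order_total")
        if not isinstance(total, (int, float)) or total < 0:
            rejected.append((case.name, "invalid input data"))
        elif case.expected != reference_discount(total):
            rejected.append((case.name, "expected value contradicts the business rule"))
        else:
            accepted.append(case.name)
    return accepted, rejected


generated = [
    GeneratedCase("discount_at_threshold", {"order_total": 100}, 10.0),
    # Looks plausible, but no discount applies below the threshold.
    GeneratedCase("discount_below_threshold", {"order_total": 99.99}, 9.99),
    # Invalid data: an order total cannot be negative.
    GeneratedCase("negative_total", {"order_total": -5}, 0.0),
]

accepted, rejected = review_cases(generated)
print("accepted:", accepted)
print("rejected:", rejected)
```

Only the first case survives the review. The second is the dangerous kind: it is well formed and reads as correct, which is why an independent check is needed rather than a visual skim.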

2. AI Lacks Business Context

AI can process patterns but does not fully understand:

- Business priorities

- Real user behavior

- Domain-specific edge cases

A test case may look technically correct but may not reflect real-world usage.

This is where human understanding becomes essential.

3. AI Can Introduce Hidden Risks

AI systems can:

- Expose sensitive data

- Misinterpret inputs

- Generate inconsistent outputs

In domains like banking or healthcare, these risks are critical.

Without proper validation, issues can scale quickly.
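One simple guard a QA engineer might add is a scan of AI outputs for data that should never appear in them. The sketch below is a minimal example under stated assumptions: the two regular expressions are deliberately simplified, the `find_sensitive` helper is hypothetical, and a real project would rely on a vetted data-loss-prevention approach rather than these patterns.

```python
import re

# Simplified patterns for illustration only; real detection needs stricter rules.
PATTERNS = {
    "email": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "card_number": re.compile(r"\b(?:\d[ -]?){13,16}\b"),
}


def find_sensitive(text: str) -> list:
    """Return the sorted labels of every pattern that matches the text."""
    return sorted(label for label, pattern in PATTERNS.items() if pattern.search(text))


leaky = "Contact jane@example.com, card 4111 1111 1111 1111"
clean = "Your order has shipped."

print(find_sensitive(leaky))
print(find_sensitive(clean))
```

A check like this can run on every generated response in a test suite, so a leak is flagged before it reaches a user instead of after.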

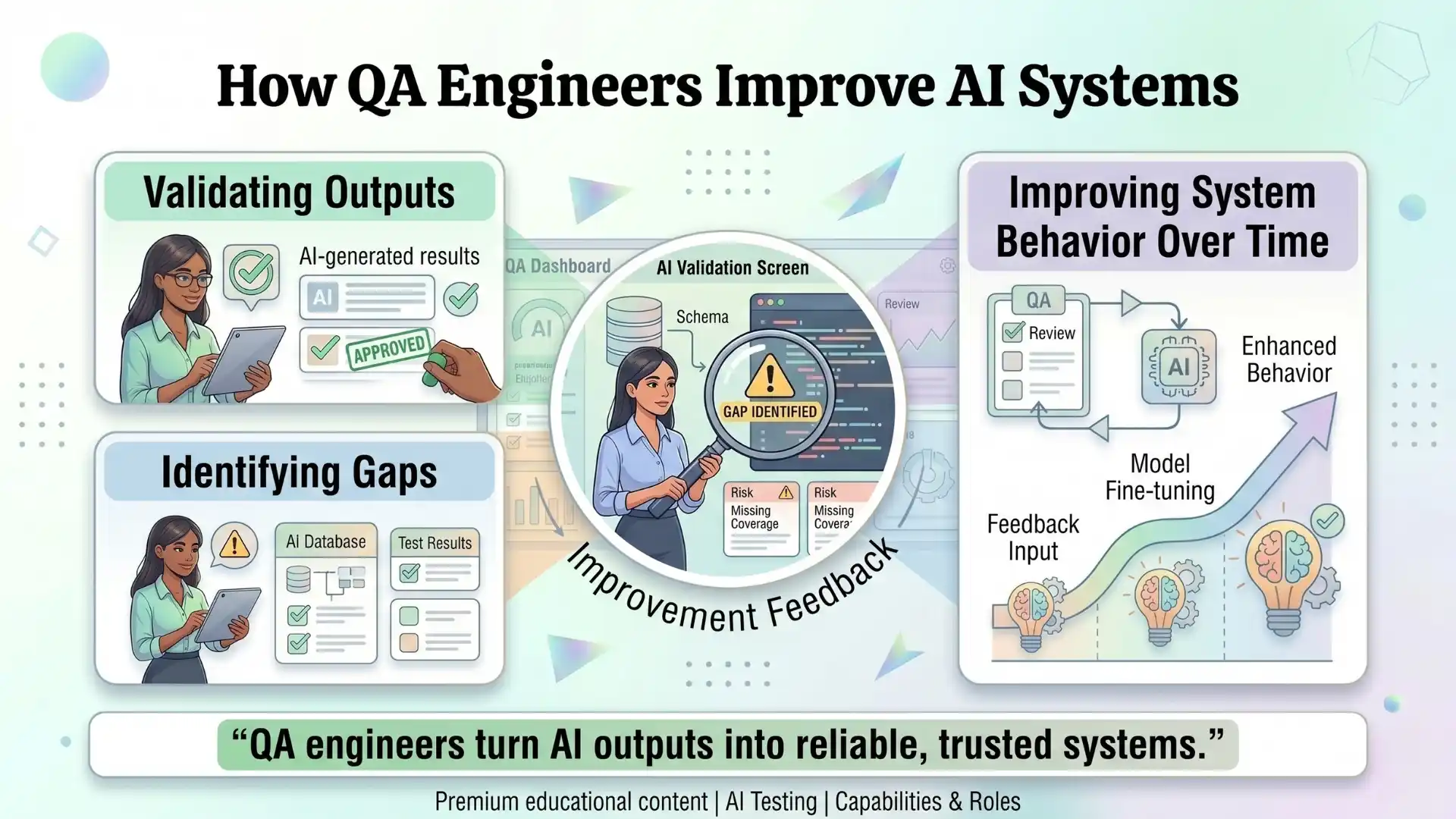

4. AI Needs Continuous Feedback to Improve

AI systems improve through:

- Feedback

- Corrections

- Iterations

QA engineers play a key role in:

- Validating outputs

- Identifying gaps

- Improving system behavior over time

Without this feedback loop, systems do not improve effectively.

If AI is doing more work, what do testers do now?

They validate, analyze risk, and ensure system reliability.

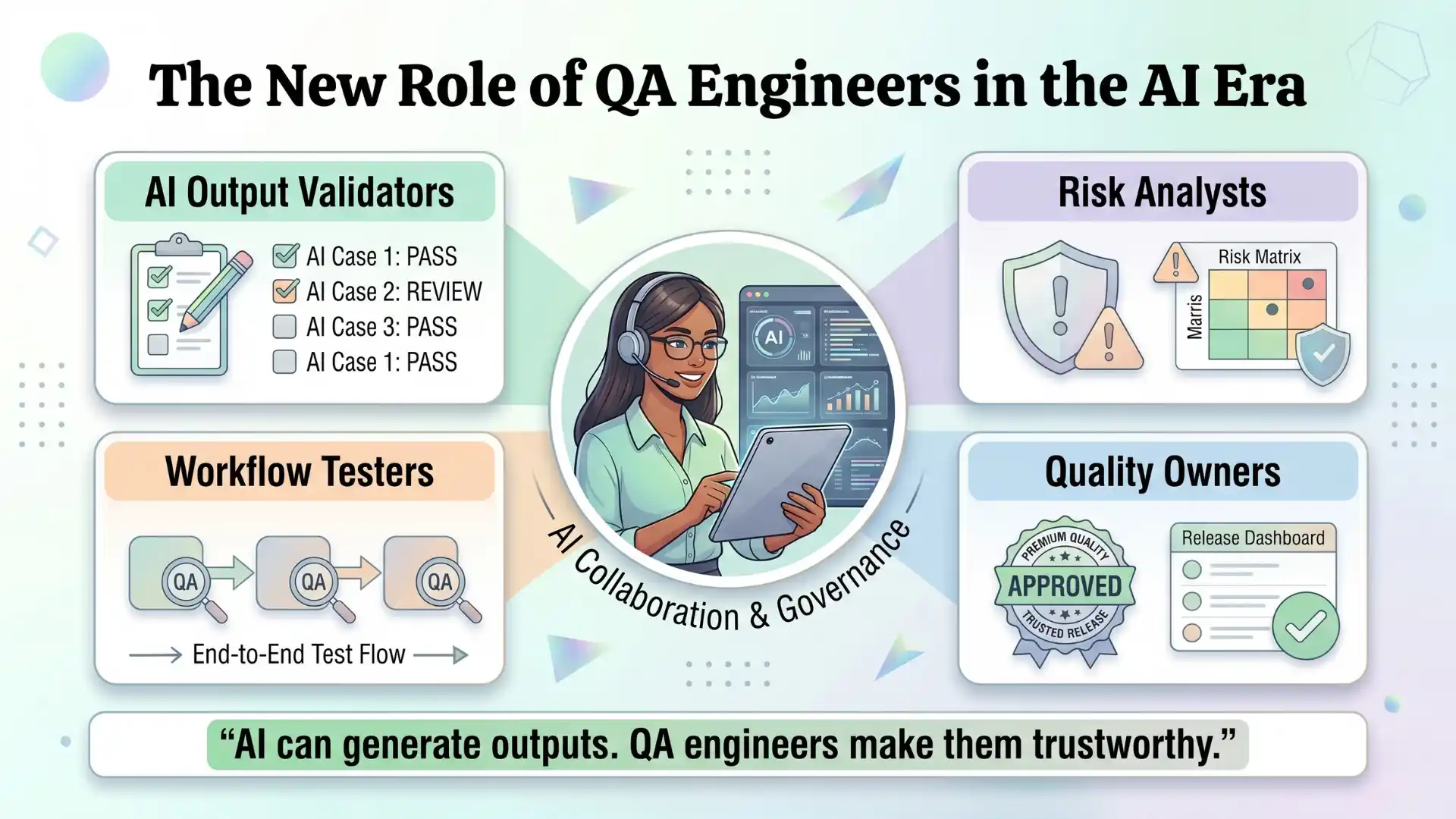

The New Role of QA Engineers in the AI Era

Testing is not disappearing.

It is evolving.

QA engineers are becoming:

AI Output Validators

- Verify correctness of generated results

- Identify inconsistencies

Risk Analysts

- Evaluate where AI systems may fail

- Identify edge cases

Workflow Testers

- Validate AI-driven processes

- Ensure integrations function correctly

Quality Owners

- Maintain confidence in system outputs

- Ensure standards are met before release

Testing AI Is Different from Testing Traditional Software

Traditional testing focuses on:

- Deterministic outputs

- Fixed logic

- Predictable behavior

AI testing involves:

- Variable outputs

- Probabilistic responses

- Model-driven behavior

This requires a shift in approach.

Instead of only verifying correctness, testing also involves evaluating:

- Accuracy

- Consistency

- Reliability

- Risk
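To make the consistency check concrete, here is a small sketch that asks the same question many times and measures how often the most common answer appears. It rests on assumptions: `fake_model` is a stand-in that replays canned answers instead of calling a real model, and the 0.8 threshold is an arbitrary example value, not a recommended standard.

```python
from collections import Counter
from itertools import cycle

# Canned answers standing in for a model whose responses vary from run to run.
_canned = cycle(["approve"] * 9 + ["reject"])


def fake_model(prompt: str) -> str:
    """Hypothetical model call; a real test would call the system under test."""
    return next(_canned)


def consistency_rate(ask, prompt: str, runs: int):
    """Ask the same prompt repeatedly; return the top answer and its share of runs."""
    answers = Counter(ask(prompt) for _ in range(runs))
    top_answer, count = answers.most_common(1)[0]
    return top_answer, count / runs


answer, rate = consistency_rate(fake_model, "Is this applicant eligible?", runs=50)
meets_threshold = rate >= 0.8  # example threshold, chosen for illustration

print(answer, rate, meets_threshold)
```

The assertion here is a rate compared against a threshold rather than a single exact match, which is the practical difference between testing probabilistic behavior and testing fixed logic.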

Why Testers Have an Advantage in the AI Era

Testers already:

- Think in edge cases

- Understand failure scenarios

- Focus on quality and risk

These skills directly apply to AI system validation.

Testers are well positioned to adapt to AI-driven testing environments.

The Opportunity Ahead

The question is not whether AI will replace testers.

The real question is how testers will adapt to working with AI systems.

As AI adoption increases, the need for:

- Validation

- Risk assessment

- Quality assurance

also increases.

Will AI replace QA engineers in the future?

No. It will increase the need for strong QA practices.

Key Takeaways

- AI produces outputs; QA engineers ensure correctness

- Human context is critical in testing AI

- AI adds new risks instead of reducing risk

- QA engineers are essential for trust

Final Thought

AI does not reduce the need for testing.

It increases the need for more reliable and thoughtful testing practices.

Because:

The more systems rely on AI, the more important it becomes to validate their behavior.

And that responsibility continues to belong to QA engineers.

AI won’t replace testers.

It will make strong testing practices more valuable and outdated practices less relevant.

FAQs

Why does AI testing need QA engineers?

AI testing needs QA engineers because AI can generate outputs, but humans are needed to validate correctness, identify risks, and ensure reliable software quality.

Can AI replace QA engineers in software testing?

No. AI can support testing tasks, but it cannot replace human judgment, business context, risk analysis, and quality ownership.

What role do QA engineers play in AI testing?

QA engineers validate AI outputs, identify gaps, test workflows, analyze risks, and improve system behavior over time through feedback and validation.

Why are AI-generated test cases not always reliable?

AI-generated test cases may contain incorrect logic, missing scenarios, hallucinated outputs, or weak business context, so they must be reviewed by QA engineers.

How is AI testing different from traditional software testing?

Traditional testing checks predictable outputs, while AI testing evaluates variable outputs, accuracy, consistency, reliability, and risk.

What are the biggest risks in AI testing?

The biggest risks in AI testing include hallucinated outputs, lack of business context, inconsistent results, hidden bias, and poor validation of AI-generated decisions.

How do QA engineers improve AI systems over time?

QA engineers improve AI systems by validating outputs, finding gaps, giving feedback, refining workflows, and ensuring the system becomes more reliable with each iteration.

Why is human judgment important in AI testing?

Human judgment is important because QA engineers understand user behavior, business rules, risk areas, and real-world scenarios that AI may miss.

What skills do QA engineers need for AI testing?

QA engineers need testing fundamentals, automation knowledge, prompt engineering basics, risk analysis, data awareness, and the ability to validate AI-generated outputs.

Is AI in software testing useful for manual testers?

Yes. AI in software testing helps manual testers generate test ideas, improve coverage, analyze risks, and move toward AI-driven QA roles.

Author’s Bio:

Content Writer at Testleaf, specializing in SEO-driven content for test automation, software development, and cybersecurity. I turn complex technical topics into clear, engaging stories that educate, inspire, and drive digital transformation.

Ezhirkadhir Raja

Content Writer – Testleaf