AI is no longer only for data science teams.

In 2026, AI is entering test design, automation, defect analysis, test data, release decisions, and production monitoring. This means QA teams cannot look at AI as just a chatbot or prompt tool anymore.

They need to understand the platform behind AI.

A machine learning platform is the system that helps teams build, train, deploy, monitor, and manage AI models. For QA teams, this matters because the future of testing is not only about writing scripts. It is about using AI safely, correctly, and at scale.

This is where AI in software testing stops being a buzzword and becomes practical.

Many companies are already using AI. McKinsey’s 2025 State of AI report says AI use in at least one business function has grown sharply, and many organizations are also experimenting with AI agents.

But here is the real question:

Are QA teams ready to test, trust, and control these AI systems?

What Is a Machine Learning Platform?

A machine learning platform is a software environment that helps teams manage the full AI lifecycle. This includes data preparation, model training, model deployment, monitoring, retraining, and governance.

For QA teams, a machine learning platform can support:

| QA Need | How ML Platforms Help |
|---|---|
| Test data analysis | Find patterns in large data sets |
| Defect prediction | Identify risky areas before release |
| Test case generation | Create test ideas from requirements |
| Automation maintenance | Detect repeated flows and unstable scripts |
| Failure analysis | Group similar failures and find root causes |
| AI agent workflows | Connect models with tools, memory, and actions |

In simple terms, a machine learning platform helps teams move from manual AI experiments to controlled AI execution.


Why QA Teams Should Care About Machine Learning Platforms

Many testers assume machine learning platforms are only for data scientists.

That is no longer true.

Modern QA teams are now expected to test AI features, validate AI outputs, review model behavior, and use AI tools inside their own testing workflow.

This shift creates a new skill gap.

A QA engineer may not need to become a full data scientist. But they should understand how AI systems are built, deployed, monitored, and improved.

That is why machine learning platforms matter.

They help QA teams understand:

- Where the model gets its data

- How the model was trained

- How predictions are made

- How output quality is checked

- How drift or wrong behavior is detected

- How AI agents are governed

Without this knowledge, QA teams may only test the UI and miss the real risk inside the AI layer.

The Big Shift: From Test Automation to AI-Driven QA

Traditional automation checks whether the application works as expected.

AI-driven QA goes one step further.

It helps teams decide what to test, when to test, where risk is high, and why failures happen.

This is the real value of AI for software testing.

For example, AI can help QA teams:

- Read user stories and suggest test cases

- Review old defects and predict risky modules

- Analyze logs and group similar failures

- Detect flaky test patterns

- Suggest better locators or reusable helpers

- Create synthetic test data

- Prioritize tests based on code changes
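To make one of these items concrete, here is a minimal sketch of synthetic test data in Python. It uses only the standard library and a fixed seed, so every run produces the same records. The `SyntheticUser` structure, its field names, the value ranges, and the country codes are hypothetical, chosen only for illustration. A model-based generator would produce more realistic data, but the same goals of reproducible and clearly fake values still apply.

```python
import random
from dataclasses import dataclass


@dataclass
class SyntheticUser:
    user_id: int
    email: str
    age: int
    country: str


# Hypothetical set of supported markets, for illustration only.
COUNTRIES = ["IN", "US", "DE", "BR"]


def make_users(count: int, seed: int = 42) -> list[SyntheticUser]:
    """Generate reproducible synthetic users: the same seed yields the same data."""
    rng = random.Random(seed)  # local generator, so tests do not share global random state
    users = []
    for i in range(count):
        users.append(
            SyntheticUser(
                user_id=i + 1,
                # The reserved .test domain keeps the addresses obviously fake.
                email=f"user{i + 1}@example.test",
                age=rng.randint(18, 90),
                country=rng.choice(COUNTRIES),
            )
        )
    return users


if __name__ == "__main__":
    for user in make_users(3):
        print(user)
```

The fixed seed is the key design choice: when a test fails, the exact same data can be regenerated to reproduce it.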

But these workflows need more than a simple AI prompt.

They need data, tools, access control, monitoring, and feedback loops. That is what machine learning platforms provide.


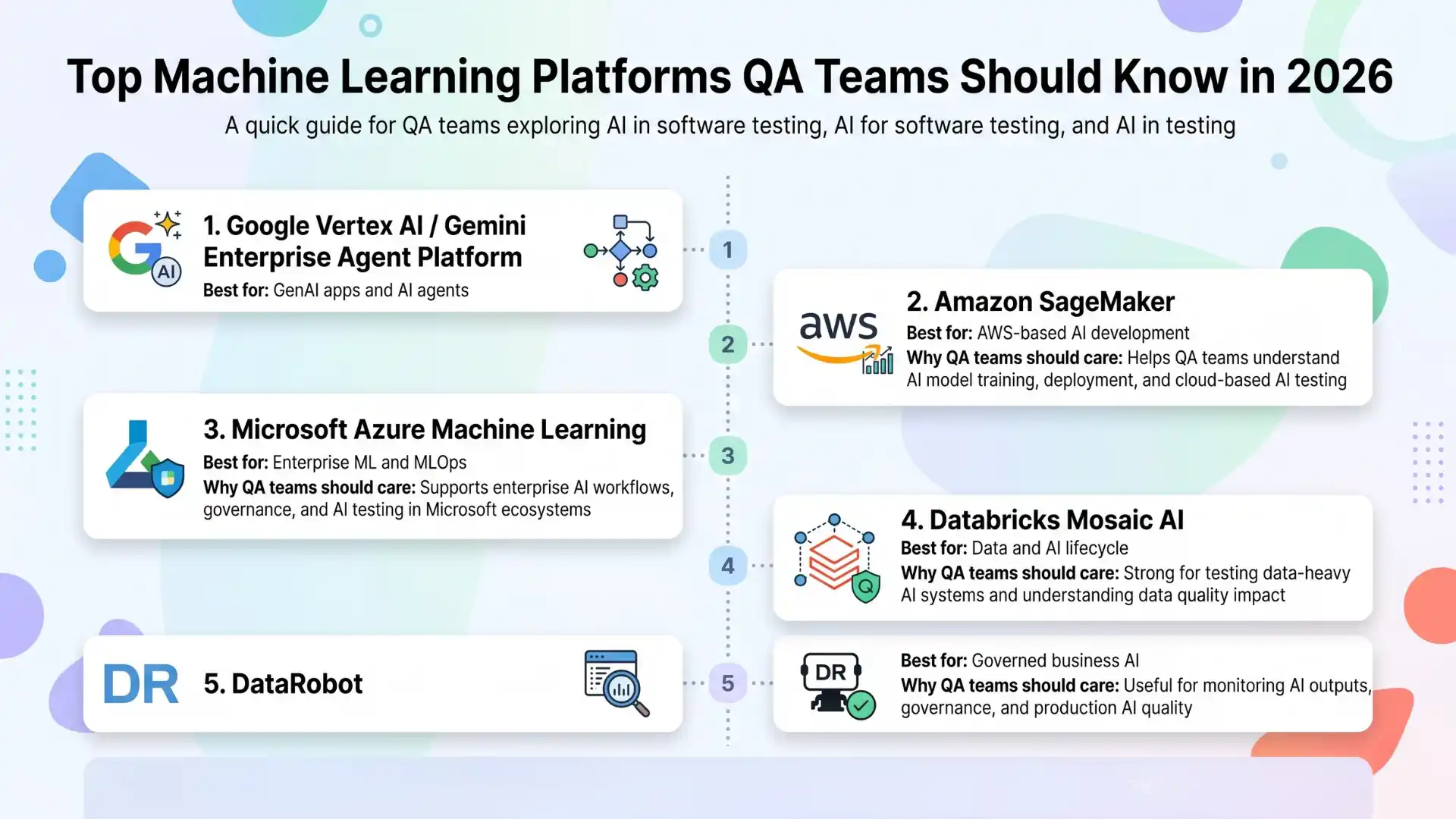

Top Machine Learning Platforms QA Teams Should Know in 2026

This is not just a “top 10 tools” list. QA teams should learn platforms based on how they support real testing and AI adoption.

1. Google Vertex AI / Gemini Enterprise Agent Platform

Google Vertex AI is a unified platform for building, deploying, and scaling generative AI and machine learning models. It supports model access, AI applications, MLOps, and GenAI workflows.

Google also positions Gemini Enterprise Agent Platform as a platform for building, scaling, governing, and optimizing enterprise-ready agents.

Why QA Teams Should Care

This platform is useful for teams exploring AI agents, test generation, defect analysis, and model-based workflows.

QA teams can use this knowledge to understand:

- How AI models are deployed

- How GenAI apps are managed

- How agents connect to enterprise systems

- How model behavior can be monitored

Best For

Google Cloud teams, GenAI testing teams, and QA teams working with AI agent workflows.

2. Amazon SageMaker

Amazon SageMaker helps teams build, train, and deploy machine learning models. AWS also offers SageMaker Unified Studio, which brings data, analytics, model development, generative AI app development, and governance into one environment.

Why QA Teams Should Care

Many enterprises already use AWS. So QA teams may need to test AI features built on SageMaker, Bedrock, or other AWS services.

This helps testers understand:

- Model training workflows

- AI deployment pipelines

- Data access rules

- Model monitoring

- AI application testing

Best For

AWS-heavy enterprises, cloud QA teams, and testers working with AI products hosted on AWS.

3. Microsoft Azure Machine Learning

Azure Machine Learning helps teams train, deploy, and manage machine learning models. Microsoft also highlights MLOps practices for managing the machine learning lifecycle.

Why QA Teams Should Care

Many enterprises use Microsoft tools. Azure ML fits well for teams already using Azure DevOps, Microsoft cloud services, and enterprise security models.

QA teams can learn how AI models move from experiment to production.

Best For

Enterprise QA teams, Microsoft ecosystem users, and teams working with regulated business applications.

4. Databricks Mosaic AI

Databricks helps teams build, deploy, and manage AI and machine learning applications across the AI lifecycle, from data preparation to production monitoring.

It also supports MLOps workflows for improving the long-term efficiency of ML systems.

Why QA Teams Should Care

Databricks is strong when AI depends on large data sets.

For QA teams, this matters because AI quality depends on data quality. If the data is wrong, the AI output will also be wrong.

Best For

Data-heavy QA teams, analytics-driven organizations, and teams testing AI systems that depend on large data pipelines.

5. DataRobot

DataRobot focuses on developing, delivering, and governing AI solutions. Its platform includes predictive AI, generative AI, governance, and observability capabilities.

Why QA Teams Should Care

DataRobot is useful for teams that want to adopt AI quickly for business use cases while keeping governance in place.

QA teams can learn how AI models are tested, monitored, and managed after deployment.

Best For

Business teams, enterprise AI teams, and QA leaders who want governed AI workflows.

Machine Learning Platform Comparison for QA Teams

| Platform | Best Use Case | QA Relevance |
|---|---|---|
| Google Vertex AI / Gemini Enterprise Agent Platform | GenAI and AI agents | Testing AI agents, model behavior, and GenAI apps |
| Amazon SageMaker | AWS-based AI development | Testing cloud AI pipelines and AI apps |
| Azure Machine Learning | Enterprise ML and MLOps | Testing AI in Microsoft-based systems |
| Databricks Mosaic AI | Data and AI lifecycle | Testing data-heavy AI systems |
| DataRobot | Governed business AI | Testing AI outputs, risks, and model monitoring |

What QA Teams Should Learn Before Using AI in Testing

QA teams do not need to master every algorithm first.

They need practical understanding.

Start with these areas:

1. Data Quality

AI is only as good as the data behind it.

QA teams should learn how to check missing data, wrong labels, duplicate records, biased data, and outdated data.
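A minimal Python sketch of these checks is shown below. It assumes each record is a dictionary with hypothetical fields (`id`, `text`, `label`, `created`) from a defect-classification data set. The allowed label set and the 365-day freshness limit are assumptions to adjust for your own data. The label-share helper only gives a first hint of bias; real bias checks need domain review.

```python
from collections import Counter
from datetime import date, timedelta

# Hypothetical rules for a defect-classification data set; adjust to your own data.
REQUIRED_FIELDS = ("id", "text", "label", "created")
ALLOWED_LABELS = {"bug", "not_bug"}
MAX_AGE = timedelta(days=365)


def check_records(records, today=None):
    """Return {issue_type: [record ids]} for missing, duplicate, mislabeled, and outdated rows."""
    today = today or date.today()
    issues = {"missing": [], "duplicate": [], "bad_label": [], "outdated": []}
    seen_texts = set()
    for index, rec in enumerate(records):
        key = rec.get("id", f"row{index}")
        if any(rec.get(field) in (None, "") for field in REQUIRED_FIELDS):
            issues["missing"].append(key)
            continue  # the remaining checks need a complete record
        # Treat records whose text differs only in case or spacing as duplicates.
        normalized = rec["text"].strip().lower()
        if normalized in seen_texts:
            issues["duplicate"].append(key)
        seen_texts.add(normalized)
        if rec["label"] not in ALLOWED_LABELS:
            issues["bad_label"].append(key)
        if today - rec["created"] > MAX_AGE:
            issues["outdated"].append(key)
    return issues


def label_shares(records):
    """Share of each label; a heavily skewed split is a first hint of biased data."""
    counts = Counter(rec.get("label") for rec in records)
    total = sum(counts.values())
    return {label: n / total for label, n in counts.items()} if total else {}
```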

2. Model Output Validation

Testing AI is not like checking a fixed button click.

AI output can change.

So testers must check accuracy, consistency, relevance, safety, and business impact.
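Accuracy and consistency are the easiest of these to automate. The sketch below assumes the AI feature can be called as a plain Python function and that the team has a small set of labeled examples. It runs each example several times, treats the most common answer as the model's answer, and reports how often that answer is correct and how often all runs agree. The `evaluate` function and the stub models are hypothetical. Safety, relevance, and business impact still need separate checks and human review.

```python
from collections import Counter
from typing import Callable


def evaluate(model: Callable[[str], str], cases: list[tuple[str, str]], runs: int = 3) -> dict:
    """Score a model on labeled (prompt, expected) cases.

    accuracy: share of cases where the most common answer matches the expected label.
    consistency: share of cases where every run returned the same answer.
    """
    if not cases:
        raise ValueError("need at least one labeled case")
    correct = consistent = 0
    for prompt, expected in cases:
        answers = [model(prompt) for _ in range(runs)]
        top_answer, top_count = Counter(answers).most_common(1)[0]
        correct += top_answer == expected
        consistent += top_count == runs
    n = len(cases)
    return {"accuracy": correct / n, "consistency": consistent / n}


if __name__ == "__main__":
    # Hypothetical stand-in for a real AI classifier.
    def stub(prompt: str) -> str:
        return "bug" if "error" in prompt else "not_bug"

    print(evaluate(stub, [("error on save", "bug"), ("works fine", "not_bug")]))
```

Running each case more than once matters because the same prompt can give different answers; a single run can hide that.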

3. MLOps Basics

MLOps means managing machine learning models from development to deployment and monitoring. Google explains MLOps as a practice that helps deploy and maintain ML models reliably and efficiently.

For QA teams, this means understanding how models are released, monitored, and retrained.
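Monitoring often means comparing live data with the data the model was trained on. One widely used measure is the Population Stability Index (PSI). The sketch below is a minimal, dependency-free version under stated assumptions: equal-width bins taken from the baseline sample, and a small floor on empty bins to avoid `log(0)`. The 0.1 and 0.25 thresholds are a commonly quoted rule of thumb, not a standard.

```python
import math


def psi(expected: list[float], actual: list[float], bins: int = 10) -> float:
    """Population Stability Index between a baseline sample and a live sample."""
    if not expected or not actual:
        raise ValueError("samples must be non-empty")
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # guard against a constant baseline

    def shares(values: list[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            # Clamp so live values outside the baseline range land in the edge bins.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # A small floor avoids log(0) when a bin is empty.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


def drift_level(score: float) -> str:
    # Rule-of-thumb thresholds; tune them per model and per feature.
    if score < 0.1:
        return "stable"
    if score < 0.25:
        return "moderate"
    return "significant"
```

A QA team could run a check like this on each input feature after every release and raise an alert when the level moves past "stable".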

4. AI Agent Testing

AI agents can plan, decide, and take actions.

This creates new testing needs:

- Did the agent understand the goal?

- Did it use the right tool?

- Did it follow guardrails?

- Did it expose private data?

- Did it take the right action?

- Can the result be traced?
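Several of these questions can be checked automatically once the agent's steps are logged. The sketch below assumes a hypothetical trace format where each step records the tool called, its output, and a trace id. It checks tool choice, an allowlist guardrail, email addresses leaking into output, and traceability. Whether the agent understood the goal or took the right action still needs human or model-assisted review.

```python
import re
from dataclasses import dataclass

# Simple pattern for email addresses; a real PII check would cover far more.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.]+")


@dataclass
class Step:
    tool: str
    output: str
    trace_id: str = ""


def check_trace(steps: list[Step], expected_tool: str, allowed_tools: set[str]) -> list[str]:
    """Return human-readable failures for one agent run (empty list means it passed)."""
    failures = []
    if expected_tool not in (s.tool for s in steps):
        failures.append(f"expected tool {expected_tool!r} was never called")
    for i, s in enumerate(steps):
        if s.tool not in allowed_tools:
            failures.append(f"step {i}: tool {s.tool!r} is outside the guardrail allowlist")
        if EMAIL.search(s.output):
            failures.append(f"step {i}: output contains an email address")
        if not s.trace_id:
            failures.append(f"step {i}: missing trace id")
    return failures
```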

This is a major reason QA teams must understand machine learning platforms in 2026.

How Machine Learning Platforms Change Software Testing

Machine learning platforms can change testing in five major ways.

1. Smarter Test Case Creation

AI can read requirements, user stories, and acceptance criteria. Then it can suggest test cases.

This saves time, but QA review is still needed.
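One simple way to keep QA review in the loop is to track which acceptance criteria are covered by test cases that a tester has actually accepted. The sketch below assumes a hypothetical `SuggestedCase` structure where each AI-suggested case names the criterion it covers and carries a `reviewed` flag. It lists criteria with no reviewed case and suggestions that point at criteria that do not exist.

```python
from dataclasses import dataclass


@dataclass
class SuggestedCase:
    title: str
    criterion_id: str       # acceptance criterion the case claims to cover
    reviewed: bool = False  # set to True only after a QA engineer accepts the case


def uncovered_criteria(criteria: list[str], suggestions: list[SuggestedCase]) -> list[str]:
    """Criterion ids that have no reviewed test case yet."""
    covered = {s.criterion_id for s in suggestions if s.reviewed}
    return [c for c in criteria if c not in covered]


def orphan_suggestions(criteria: list[str], suggestions: list[SuggestedCase]) -> list[str]:
    """Titles of suggested cases that reference an unknown criterion."""
    known = set(criteria)
    return [s.title for s in suggestions if s.criterion_id not in known]
```

Unreviewed suggestions deliberately do not count as coverage, so the report shows exactly where human review is still missing.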

2. Better Defect Analysis

AI can group similar bugs, read logs, and identify repeated failure patterns.

This helps QA teams move faster during regression cycles.
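AI-based clustering is one option, but a simple rule-based baseline already removes a lot of noise. The sketch below is a plain heuristic, not a machine learning model: it masks volatile parts of a failure message (hex ids, quoted strings, numbers) and groups tests whose messages then match. The input format, a mapping from test name to failure message, is an assumption.

```python
import re
from collections import defaultdict


def signature(message: str) -> str:
    """Reduce a failure message to a stable signature by masking volatile parts."""
    stripped = message.strip()
    first_line = stripped.splitlines()[0] if stripped else ""
    masked = re.sub(r"0x[0-9a-fA-F]+", "<hex>", first_line)
    masked = re.sub(r"'[^']*'|\"[^\"]*\"", "<str>", masked)
    masked = re.sub(r"\d+", "<n>", masked)
    return masked


def group_failures(failures: dict[str, str]) -> dict[str, list[str]]:
    """Map each signature to the tests that failed with it, largest group first."""
    groups = defaultdict(list)
    for test_name, message in failures.items():
        groups[signature(message)].append(test_name)
    # One root cause often explains many failures, so show big groups first.
    return dict(sorted(groups.items(), key=lambda kv: -len(kv[1])))
```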

3. Risk-Based Test Prioritization

Instead of running every test blindly, AI can suggest which tests are more important based on code changes, defect history, and production usage.
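The scoring behind this kind of prioritization can be sketched as a weighted sum of the three signals named above. The `CaseInfo` structure, its fields, and the weights are hypothetical. A learned model would estimate the weights from history instead of fixing them by hand.

```python
from dataclasses import dataclass


@dataclass
class CaseInfo:
    name: str
    covered_files: set[str]  # files the test exercises, e.g. from coverage data
    failure_rate: float      # historical share of runs that failed, 0..1
    usage_weight: float      # relative production traffic of the feature, 0..1


# Hypothetical weights; a team would tune these against its own history.
WEIGHTS = {"change": 0.5, "history": 0.3, "usage": 0.2}


def risk_score(case: CaseInfo, changed_files: set[str]) -> float:
    touched = 1.0 if case.covered_files & changed_files else 0.0
    return (
        WEIGHTS["change"] * touched
        + WEIGHTS["history"] * case.failure_rate
        + WEIGHTS["usage"] * case.usage_weight
    )


def prioritize(cases: list[CaseInfo], changed_files: set[str]) -> list[str]:
    """Test names ordered from highest to lowest risk."""
    ranked = sorted(cases, key=lambda c: risk_score(c, changed_files), reverse=True)
    return [c.name for c in ranked]
```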

4. Flaky Test Detection

AI can study automation reports and find patterns behind flaky tests.

This is useful for Selenium, Playwright, Appium, and API automation teams.
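A common starting signal for flakiness is a test that both passes and fails on the same commit: the code did not change, but the result did. The sketch assumes run results have already been exported (for example from JUnit-style XML reports) into hypothetical `(test_name, commit, passed)` tuples; the parsing step is not shown.

```python
from collections import defaultdict


def find_flaky(runs):
    """Flag tests that both passed and failed on the same commit.

    runs: iterable of (test_name, commit_sha, passed) tuples.
    """
    outcomes = defaultdict(set)
    for test, commit, passed in runs:
        outcomes[(test, commit)].add(passed)
    # Two distinct outcomes ({True, False}) on one commit means the result was unstable.
    flaky = {test for (test, _), results in outcomes.items() if len(results) == 2}
    return sorted(flaky)
```

A test that fails on every run is a real failure, and a test that starts failing after a new commit is more likely a regression. This rule skips both and only flags unstable results.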

5. AI Agent-Based QA Workflows

In the future, QA agents may create test cases, run automation, analyze failures, raise defects, and recommend fixes.

But this should not happen without control.

That control comes from the platform layer.
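A minimal sketch of that control is a policy gate between the agent and its tools. The action names and the three-way policy below are hypothetical: read-only actions run freely, actions that change shared state need a named human approver, and anything not listed is blocked by default.

```python
from enum import Enum
from typing import Optional


class Decision(Enum):
    ALLOW = "allow"
    NEEDS_APPROVAL = "needs_approval"
    BLOCK = "block"


# Hypothetical policy for a QA agent; the action names are illustrative only.
READ_ONLY_ACTIONS = {"run_tests", "read_logs", "analyze_failures"}
APPROVAL_ACTIONS = {"raise_defect", "recommend_fix"}


def gate(action: str, approved_by: Optional[str] = None) -> Decision:
    """Decide whether an agent may perform an action right now."""
    if action in READ_ONLY_ACTIONS:
        return Decision.ALLOW
    if action in APPROVAL_ACTIONS:
        # Changes to shared state go ahead only with a named human approver.
        return Decision.ALLOW if approved_by else Decision.NEEDS_APPROVAL
    # Deny by default: unknown actions are blocked rather than guessed at.
    return Decision.BLOCK
```

Deny-by-default keeps the agent from taking any action no one has reviewed. In practice, each decision would also be logged so the run can be traced later.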

Common Mistakes QA Teams Should Avoid

Many teams rush into AI without a clear plan.

Avoid these mistakes:

| Mistake | Why It Is Risky |
|---|---|
| Using AI without data checks | Wrong data leads to wrong output |
| Trusting AI output blindly | AI can sound confident and still be wrong |
| Ignoring MLOps | Models can fail after deployment |
| Testing only the UI | AI risk may be inside the model or data layer |
| No human review | QA judgment is still needed |
| No governance | AI can create security and compliance risks |

The goal is not to replace testers.

The goal is to make testers stronger.


The Future QA Skill: Platform Thinking

In the past, QA engineers learned tools.

Then they learned automation frameworks.

Now they must learn platforms.

This does not mean every tester must become an AI engineer.

But every future-ready QA professional should understand how AI systems work from end to end.

That includes:

- Data

- Models

- Prompts

- APIs

- Tools

- Agents

- Monitoring

- Governance

- Human review

This is the future of AI in testing.

QA teams that understand this will not just test screens. They will test intelligence.

Conclusion

Machine learning platforms are becoming a key part of modern software delivery.

For QA teams, this is a big shift.

Testing is no longer only about checking whether a feature works. It is also about checking whether AI systems are accurate, safe, stable, explainable, and useful.

In 2026, the strongest QA professionals will not be the ones who only know manual testing or automation scripts.

They will be the ones who understand how AI works inside real software systems.

That is why machine learning platforms matter.

They are the bridge between software testing, automation, data, models, MLOps, and AI agents.

QA teams that learn this now will be better prepared for the future of software testing.

Join Our GenAI Webinar

Want to learn how AI agents can change the way QA teams work?

Join our GenAI webinar:

AI Master Class for QA Professionals – Master AI Agents

Learn how QA professionals can use GenAI, prompts, automation, and AI agents to stay ready for the next phase of software testing.

Attend the webinar and start building future-ready QA skills with Testleaf.

FAQs

What is a machine learning platform?

A machine learning platform is a software system that helps teams build, train, deploy, monitor, and manage AI models. It supports the full AI lifecycle from data to production.

Why should QA teams learn machine learning platforms?

QA teams should learn machine learning platforms because AI is becoming part of software testing, automation, defect analysis, test data, and release decisions. Testers need to understand how AI systems are built and controlled.

How is AI used in software testing?

AI in software testing can help with test case generation, defect prediction, failure analysis, flaky test detection, test data creation, and risk-based test prioritization.

Is AI for software testing replacing testers?

No. AI for software testing is not replacing testers. It is changing the tester’s role. Testers will need stronger skills in AI validation, automation, data quality, and critical thinking.

What is the difference between MLOps and test automation?

Test automation runs checks on software. MLOps manages the machine learning model lifecycle, from development through deployment, monitoring, retraining, and governance. QA teams need both when testing AI-based systems.

Which machine learning platform is best for QA teams?

There is no single best platform for every QA team. AWS teams may prefer SageMaker. Google Cloud teams may use Vertex AI. Microsoft-based enterprises may use Azure Machine Learning. Data-heavy teams may use Databricks. Teams that want governed business AI may look at DataRobot.

We Also Provide Training In:

- Advanced Selenium Training

- Playwright Training

- Gen AI Training

- AWS Training

- REST API Training

- Full Stack Training

- Appium Training

- DevOps Training

- JMeter Performance Training

Author’s Bio:

Content Writer at Testleaf, specializing in SEO-driven content for test automation, software development, and cybersecurity. I turn complex technical topics into clear, engaging stories that educate, inspire, and drive digital transformation.

Ezhirkadhir Raja

Content Writer – Testleaf