Building AI Is Easy. Trusting It Is Not.

Almost anyone can build an AI-powered application today.

With APIs, tools, and frameworks, you can generate outputs in seconds. You can create chatbots, automate workflows, and even generate test cases using AI in software testing.

But here’s the real problem:

👉 Can you trust what the AI produces?

Because in real-world systems, an AI model does not guarantee correctness.

It predicts the most likely output, and the most likely output can still be wrong.

And that changes everything.

Who makes AI systems trustworthy?

AI engineers build AI systems, but QA engineers ensure trust by validating outputs, managing risks, and performing AI system validation in real-world scenarios.

What AI Engineers Actually Do

AI engineers focus on building intelligent systems.

They:

- Train models

- Fine-tune outputs

- Improve accuracy

- Integrate AI into applications

Their goal is simple:

👉 Make AI systems work

But working does not mean reliable.

Why AI Systems Are Not Reliable by Default

AI behaves differently from traditional software.

In traditional systems:

- Same input → Same output ✔

In AI systems:

- Same input → Possibly different output ❌

This is one of the biggest challenges in AI testing.

AI systems:

- Learn from imperfect data

- Produce probabilistic results

- Can hallucinate confidently

👉 That means incorrect outputs can look correct

This is where trust breaks.
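To make the contrast concrete, here is a minimal Python sketch. The names `sampled_reply` and `CANDIDATES` are hypothetical stand-ins invented for this illustration, not a real model API; the only assumption is that the model samples its reply instead of computing a fixed one.

```python
import random

def traditional_add(a: int, b: int) -> int:
    """Traditional code: the same input always returns the same output."""
    return a + b

# Hypothetical candidate replies, used only for this illustration.
CANDIDATES = ["Reset link sent.", "Check your email.", "Password updated."]

def sampled_reply(prompt: str, rng: random.Random) -> str:
    """Stand-in for a sampling-based model; not a real model API."""
    return rng.choice(CANDIDATES)

def distinct_outputs(call, runs: int = 50) -> set:
    """Invoke call() repeatedly and collect the distinct results."""
    return {call() for _ in range(runs)}

rng = random.Random(42)  # fixed seed so the demo itself is repeatable
deterministic = distinct_outputs(lambda: traditional_add(2, 3))
sampled = distinct_outputs(lambda: sampled_reply("I forgot my password", rng))

print(len(deterministic))  # 1: the deterministic function never varies
print(len(sampled))        # more than 1 when the sampler varies its reply
```

Counting distinct outputs for one fixed input is a crude but useful first signal of how much a system varies.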

The Missing Layer: AI System Validation

Building AI is only half the job.

The real challenge is:

👉 Testing AI systems in real-world conditions

This is where AI in software testing becomes critical.

AI system validation includes:

- Checking output correctness

- Measuring consistency

- Identifying edge cases

- Evaluating risk

👉 Without this, AI systems remain unpredictable
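As a rough illustration, three of these checks (correctness, consistency, and a simple risk flag) can be sketched in a few lines of Python. The `ValidationReport` class, the `validate_outputs` function, and the 0.9 threshold are assumptions made for this example, not a standard tool. Edge cases are left out because they depend on domain knowledge.

```python
from dataclasses import dataclass
from typing import Sequence

@dataclass
class ValidationReport:
    correctness: float  # share of outputs that match the expected answer
    consistency: float  # share of outputs equal to the most common output
    high_risk: bool     # True when either score falls below the threshold

def validate_outputs(outputs: Sequence[str], expected: str,
                     threshold: float = 0.9) -> ValidationReport:
    """Score repeated outputs for one prompt (threshold is an assumed value)."""
    if not outputs:
        raise ValueError("need at least one output to validate")
    normalized = [o.strip().lower() for o in outputs]
    target = expected.strip().lower()
    correctness = sum(o == target for o in normalized) / len(normalized)
    most_common = max(normalized.count(o) for o in set(normalized))
    consistency = most_common / len(normalized)
    high_risk = correctness < threshold or consistency < threshold
    return ValidationReport(correctness, consistency, high_risk)

# Four runs of the same prompt; one run disagrees with the others.
report = validate_outputs(["4", "4", " 4 ", "5"], expected="4")
print(report)
```

Here three of the four runs agree and are correct, so both scores are 0.75 and the report is flagged as high risk under the assumed threshold.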

Where QA Engineers Come In

QA engineers solve the trust problem.

They focus on:

- Validating AI-generated outputs

- Identifying incorrect or risky behavior

- Testing edge cases

- Ensuring real-world reliability

👉 Their goal is not just testing

👉 It is building trust

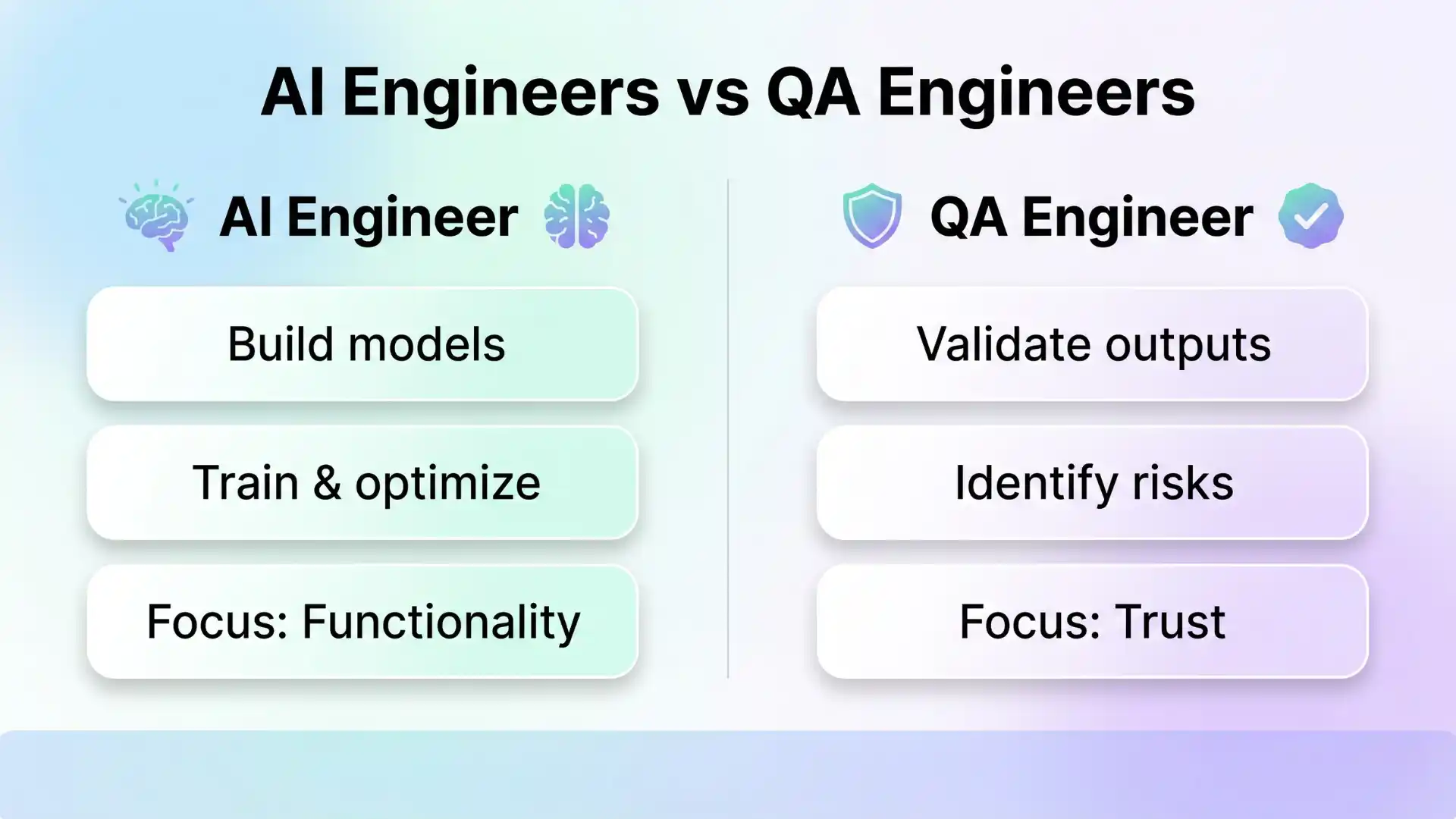

AI Engineers vs QA Engineers

| Role | Focus | Outcome |
|---|---|---|
| AI Engineer | Build & optimize models | Functionality |
| QA Engineer | Test & validate outputs | Trust |

👉 AI engineers make systems work

👉 QA engineers make systems reliable

Real Example

Requirement:

“Generate login test cases using AI”

AI Output:

- Valid login

- Invalid login

QA Engineer Adds:

- Security edge cases

- Multiple session handling

- Brute force attack scenarios

👉 AI for software testing improves speed

👉 QA engineers improve quality
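The gap in this example can be shown with a small Python sketch that compares AI-generated test titles against a QA checklist. The categories come from the example above; the keyword lists and the `missing_categories` helper are hypothetical, and a real review would rely on human judgment, not keyword matching.

```python
import re

# QA checklist: category -> keywords that count as coverage (assumed values).
REQUIRED_CATEGORIES = {
    "valid login": ["valid login"],
    "invalid login": ["invalid login"],
    "security edge cases": ["security"],
    "multiple session handling": ["session"],
    "brute force attack scenarios": ["brute force"],
}

def missing_categories(test_titles, required=REQUIRED_CATEGORIES):
    """Return the checklist categories that no test title covers."""
    titles = [t.lower() for t in test_titles]
    missing = []
    for category, keywords in required.items():
        # \b keeps "valid login" from matching inside "invalid login".
        patterns = [re.compile(r"\b" + re.escape(k) + r"\b") for k in keywords]
        if not any(p.search(t) for p in patterns for t in titles):
            missing.append(category)
    return missing

ai_output = ["Valid login", "Invalid login"]
print(missing_categories(ai_output))
# ['security edge cases', 'multiple session handling', 'brute force attack scenarios']
```

The AI output covers the two obvious cases, and the checklist surfaces the three categories the QA engineer had to add.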

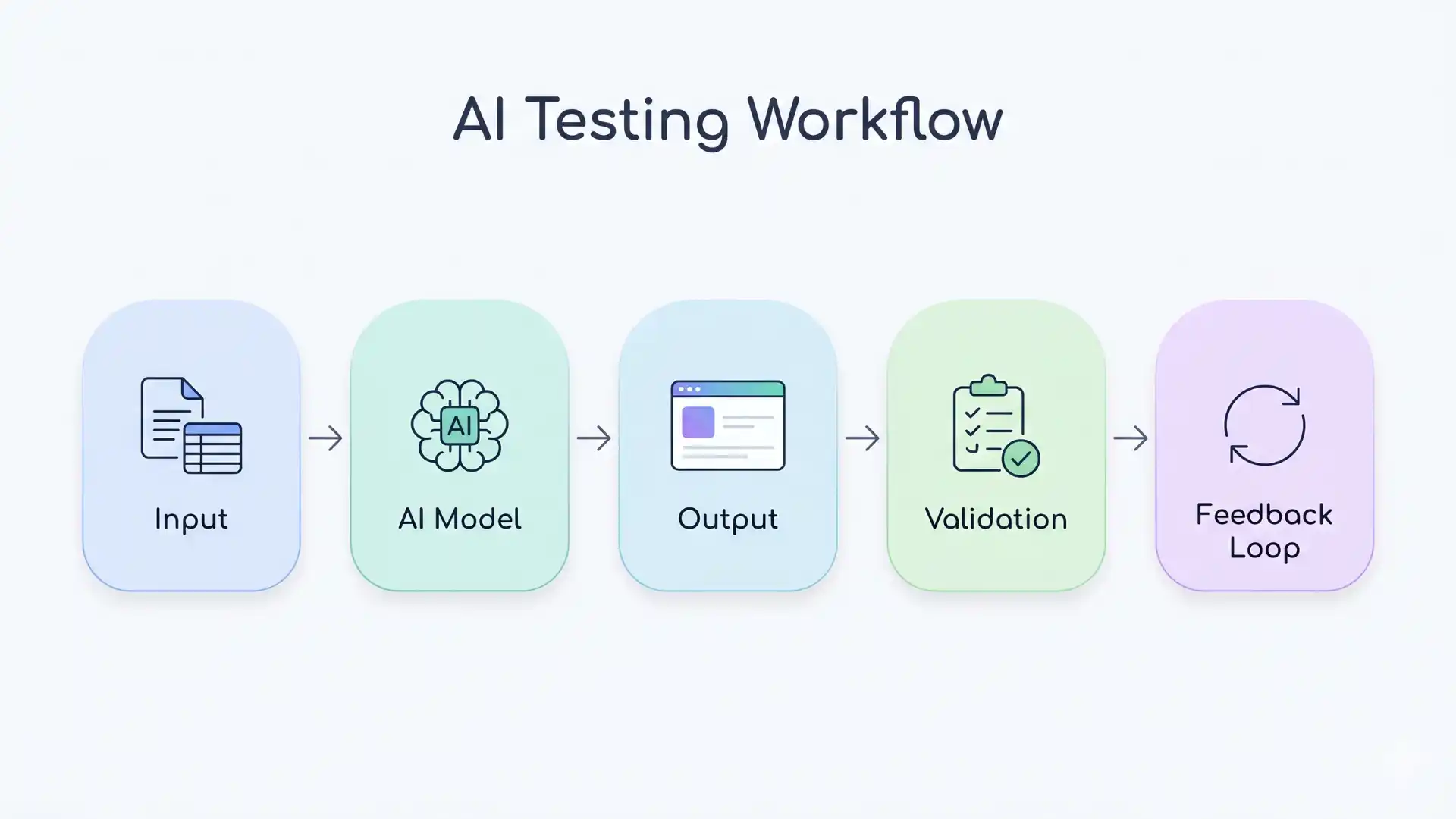

The Real AI Testing Workflow

Modern systems follow this loop:

Input → Model → Output → Validation → Feedback

- AI engineers build the system

- QA engineers validate the output

- Feedback improves the system

👉 This is the foundation of AI in testing
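The loop can be sketched in Python as follows. `run_loop`, `toy_model`, and `non_empty` are hypothetical names for this illustration: the model is a trivial stand-in, and the validator represents whatever checks the QA engineer defines.

```python
from typing import Callable, List, Tuple

Pair = Tuple[str, str]  # (input, output)

def run_loop(inputs: List[str],
             model: Callable[[str], str],
             validator: Callable[[str, str], bool]) -> Tuple[List[Pair], List[Pair]]:
    """Input -> Model -> Output -> Validation -> Feedback."""
    accepted: List[Pair] = []
    feedback: List[Pair] = []
    for item in inputs:
        output = model(item)              # Model produces an output
        if validator(item, output):       # Validation step owned by QA
            accepted.append((item, output))
        else:
            feedback.append((item, output))  # Sent back to improve the system
    return accepted, feedback

def toy_model(text: str) -> str:
    """Trivial stand-in for a model: tidy the text into a title."""
    return text.strip().title()

def non_empty(_item: str, output: str) -> bool:
    """Example validation rule: reject empty outputs."""
    return bool(output)

accepted, feedback = run_loop(["reset password", "   "], toy_model, non_empty)
print(accepted)  # [('reset password', 'Reset Password')]
print(feedback)  # [('   ', '')]
```

Rejected pairs are the feedback: they show AI engineers which inputs the system handles badly.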

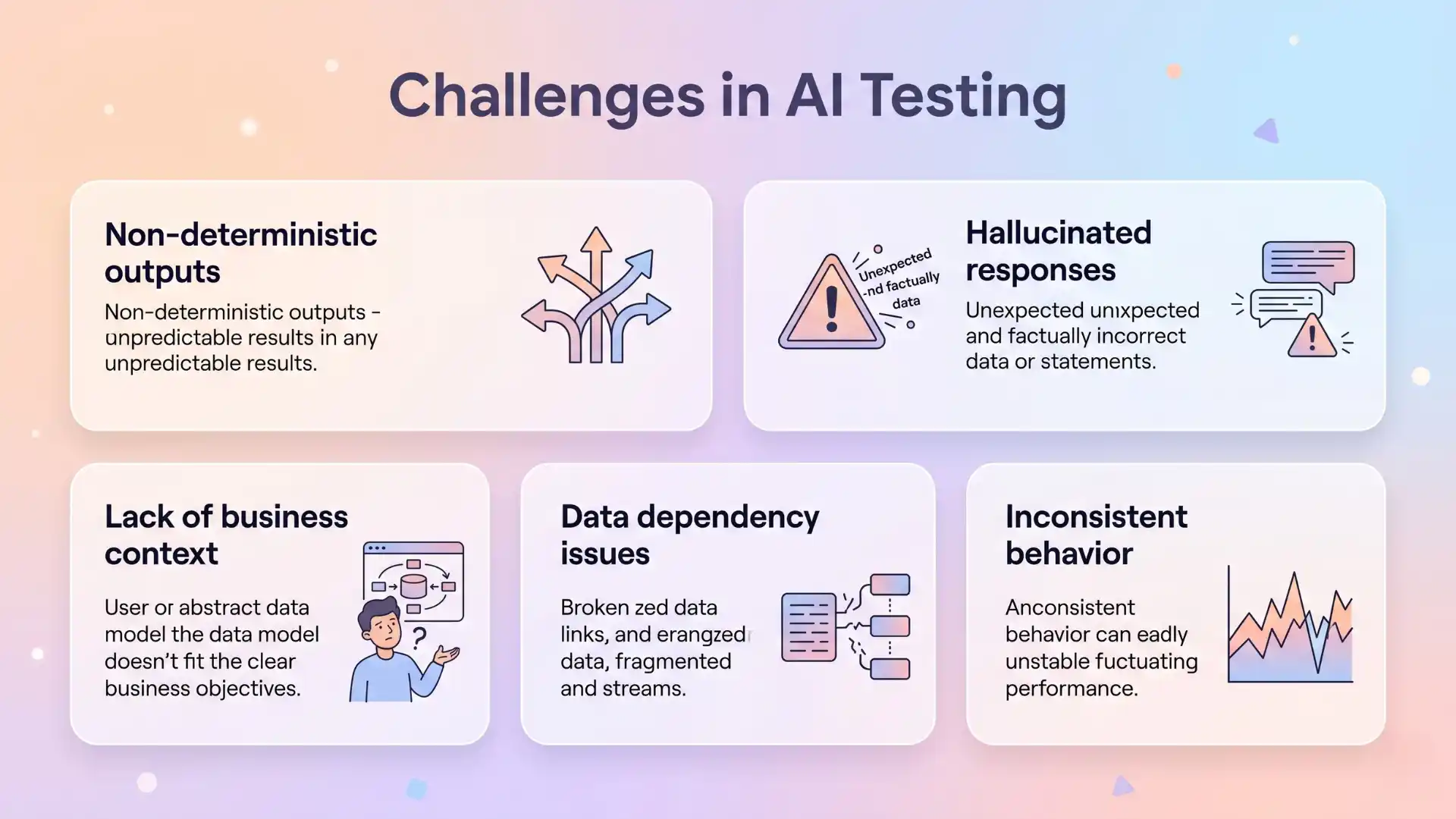

Challenges in AI Testing

AI introduces new testing challenges:

- Non-deterministic outputs

- Lack of business context

- Hallucinated responses

- Data dependency issues

- Inconsistent behavior

👉 This is why testing AI systems is different from traditional testing

Without proper validation:

- Errors go unnoticed

- Risk increases

- Trust decreases

The New Role of QA Engineers in the AI Era

QA engineers are evolving into:

- AI Output Validators

- Risk Analysts

- Workflow Testers

- Quality Owners

They ensure:

- Accuracy

- Consistency

- Reliability

👉 This is the future of AI in software testing

What Happens Without QA?

Without QA validation:

- AI outputs remain unchecked

- Errors scale faster

- Systems fail silently

- Trust collapses

👉 The system works, but cannot be trusted

And that is more dangerous than failure.

Can AI engineers alone make AI systems reliable?

No. Reliability requires continuous validation, which is the role of QA engineers.

Is AI replacing QA engineers?

No. AI increases the need for QA by introducing new testing challenges and risks.

Why This Matters Now

AI adoption is growing across industries.

As AI grows:

- Complexity increases

- Risk increases

- Need for validation increases

👉 This makes AI in software testing more important than ever

Final Thought

AI engineers build intelligence.

QA engineers build trust.

And in the real world:

👉 Trust matters more than intelligence

Because a system that works but cannot be trusted

is more dangerous than one that fails visibly.

Key Takeaways

- AI systems are not reliable by default

- QA engineers ensure trust through validation

- AI for software testing improves speed, not accuracy

- Testing AI systems requires new strategies

- Trust is the foundation of modern AI systems

FAQs

Who makes AI systems trustworthy?

AI engineers build AI systems, but QA engineers ensure trust by validating outputs, identifying risks, and performing AI system validation in real-world scenarios.

Why are AI systems not reliable by default?

AI systems produce probabilistic outputs and can generate incorrect results that appear correct, making validation essential in AI testing.

What is AI system validation in software testing?

AI system validation is the process of testing AI outputs for accuracy, consistency, reliability, and real-world behavior using structured testing approaches.

How is AI in software testing different from traditional testing?

AI in software testing focuses on validating variable outputs and probabilistic behavior, while traditional testing checks fixed and predictable outputs.

What role do QA engineers play in AI testing?

QA engineers validate AI outputs, identify edge cases, analyze risks, and ensure AI systems behave correctly under real-world conditions.

Can AI replace QA engineers in software testing?

No. AI supports testing tasks but cannot replace human judgment, domain knowledge, and risk analysis required for reliable testing.

What are the biggest challenges in AI testing?

Key challenges include non-deterministic outputs, hallucinated responses, lack of business context, and data dependency issues.

How does AI for software testing help QA engineers?

AI for software testing helps generate test cases, detect patterns, and improve workflows, but still requires human validation for accuracy.

Author’s Bio:

Content Writer at Testleaf, specializing in SEO-driven content for test automation, software development, and cybersecurity. I turn complex technical topics into clear, engaging stories that educate, inspire, and drive digital transformation.

Ezhirkadhir Raja

Content Writer – Testleaf