What are real AI use cases in software testing?

Real AI use cases in software testing include predictive defect analytics, risk-based test prioritization, self-healing automation, synthetic data generation, and AI-driven test design.

Software testing is not becoming more complex.

Software itself is.

Microservices, AI-native applications, continuous deployment, regulatory constraints, distributed systems — the modern software ecosystem is fundamentally different from what traditional automation was designed to handle.

For years, automation improved speed.

Now, intelligence must improve resilience.

According to the World Quality Report (Capgemini & Sogeti), organizations increasingly view AI-driven quality engineering as a strategic investment rather than an experimental initiative. Meanwhile, McKinsey’s State of AI report highlights rapid AI adoption across IT and engineering functions, particularly in environments with high release velocity.

This signals something important:

AI in software testing is not a trend.

It is becoming infrastructure.

But most content on this topic is shallow — listing tools or repeating buzzwords.

Let’s go deeper.

This article explores the AI use cases in software testing that will still matter a decade from now — because they are structural, not fashionable.

Key Takeaways

- AI shifts testing from execution to intelligence

- Predictive testing improves release confidence

- Self-healing reduces maintenance overhead

- Synthetic data enables compliance and scalability

- AI testing will expand to testing AI systems

Who Should Read This?

- QA Engineers exploring AI in software testing

- Automation testers moving beyond scripts

- DevOps teams optimizing CI/CD pipelines

- Tech leaders building intelligent QA systems

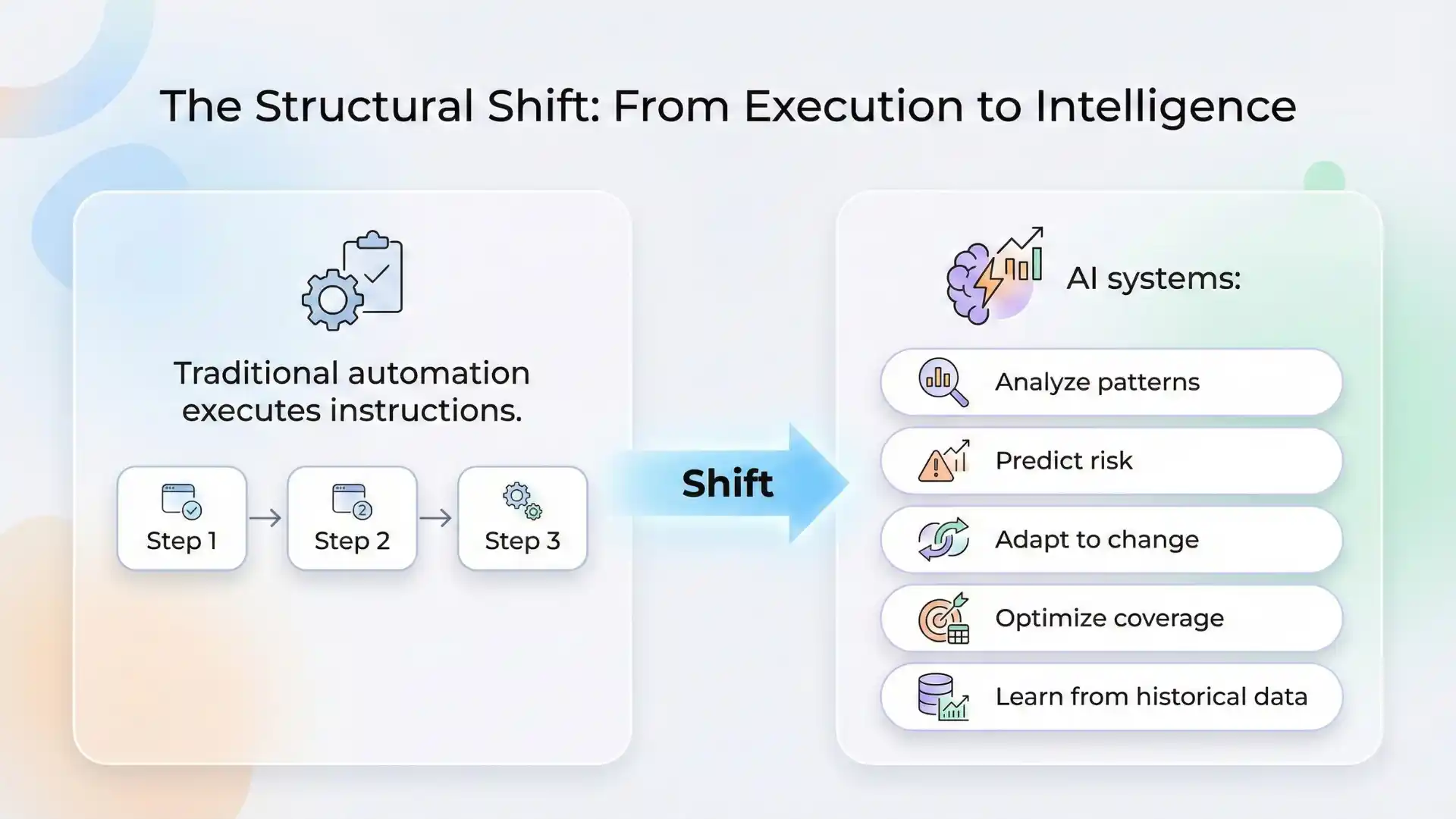

The Structural Shift: From Execution to Intelligence

Traditional automation executes instructions.

AI systems:

- Analyze patterns

- Predict risk

- Adapt to change

- Optimize coverage

- Learn from historical data

This is not just faster testing.

It is systemic intelligence layered into quality engineering.

If we want AI use cases that last 10 years, they must solve structural problems — not temporary ones.

AI-Driven Testing vs Traditional Automation

| Aspect | Traditional Automation | AI-Driven Testing |
|---|---|---|
| Execution | Script-based | Intelligent & adaptive |
| Test Design | Manual | AI-generated |
| Maintenance | High | Self-healing |
| Risk Detection | Reactive | Predictive |
| Decision Making | Manual | Data-driven |

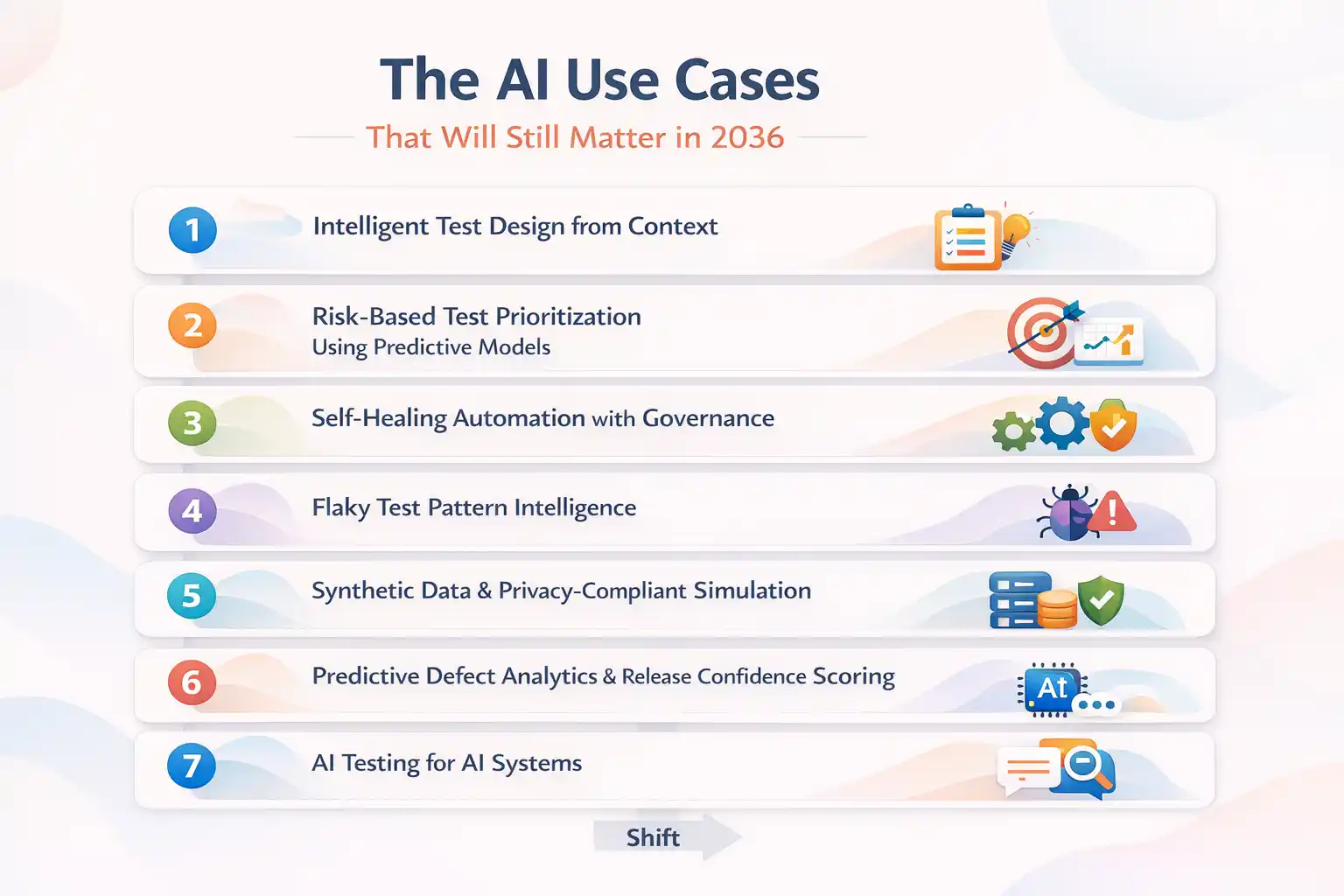

The AI Use Cases That Will Still Matter in 2036

Instead of random examples, let’s group them by long-term impact.

1. Intelligent Test Design from Context

Generative models can now:

- Convert requirements into structured test cases

- Derive negative scenarios from business rules

- Generate API test payloads from OpenAPI specifications

- Suggest edge cases based on production logs

But the real value is not speed.

It is contextual coverage intelligence.

As systems grow more distributed and dynamic, manual test design cannot scale linearly. AI-driven contextual generation will become a standard layer in QA workflows.

Ten years from now, this won’t be optional.
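To make "negative scenarios from business rules" concrete, here is a minimal, deterministic sketch of the kind of output a generative model would be asked to produce from a specification. It is rule-based, not a generative model. The schema fragment `ORDER_SCHEMA` and the helper names are hypothetical and exist only for illustration. The fragment follows the general shape of an OpenAPI schema object.

```python
from typing import Any

# Hypothetical schema fragment, shaped like an OpenAPI "schema" object.
ORDER_SCHEMA: dict[str, Any] = {
    "type": "object",
    "required": ["sku", "quantity"],
    "properties": {
        "sku": {"type": "string", "minLength": 3},
        "quantity": {"type": "integer", "minimum": 1, "maximum": 100},
    },
}


def valid_value(prop: dict[str, Any]) -> Any:
    """Return one value that satisfies the constraints handled in this sketch."""
    if prop["type"] == "integer":
        return prop.get("minimum", 0)
    if prop["type"] == "string":
        return "x" * prop.get("minLength", 1)
    raise ValueError(f"unsupported type in this sketch: {prop['type']}")


def negative_payloads(schema: dict[str, Any]) -> list[tuple[str, dict[str, Any]]]:
    """Derive (label, payload) pairs that each violate exactly one rule."""
    base = {name: valid_value(p) for name, p in schema["properties"].items()}
    cases: list[tuple[str, dict[str, Any]]] = []
    # One case per required field: drop it from an otherwise valid payload.
    for name in schema.get("required", []):
        cases.append((f"missing {name}", {k: v for k, v in base.items() if k != name}))
    # One case per boundary: step just outside the allowed range.
    for name, prop in schema["properties"].items():
        if "minimum" in prop:
            cases.append((f"{name} below minimum", {**base, name: prop["minimum"] - 1}))
        if "maximum" in prop:
            cases.append((f"{name} above maximum", {**base, name: prop["maximum"] + 1}))
        if prop.get("minLength", 0) > 0:
            cases.append((f"{name} too short", {**base, name: "x" * (prop["minLength"] - 1)}))
    return cases


for label, payload in negative_payloads(ORDER_SCHEMA):
    print(label, payload)
```

A model-driven generator would go further, for example by inferring rules that are only stated in prose, but its output can be reviewed in the same labeled form.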

2. Risk-Based Test Prioritization Using Predictive Models

Regression suites tend to grow with every release, often faster than the time available to run them.

AI models trained on the following signals can prioritize high-risk tests dynamically:

- Code changes

- Historical defect density

- Commit frequency

- Module complexity

This moves testing from running every test with equal weight to ordering tests by their estimated probability of exposing a defect.

In high-frequency CI/CD environments, risk-based prioritization will become the norm — not an advanced practice.
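As a rough illustration of risk-based ordering, the sketch below scores each test with a hand-weighted sum of three signals and runs the highest-scoring tests first. The weights, the field names, and the `SuiteEntry` class are assumptions for this example. A real system would typically learn the weights from historical data rather than hard-code them.

```python
from dataclasses import dataclass


@dataclass
class SuiteEntry:
    name: str
    touches_changed_code: bool      # does the test cover files in the current diff?
    historical_failure_rate: float  # share of past runs that failed, 0..1
    module_complexity: float        # normalized complexity of the covered module, 0..1


# Assumed weights for illustration only; not derived from any dataset.
WEIGHTS = {"change": 0.5, "failures": 0.3, "complexity": 0.2}


def risk_score(entry: SuiteEntry) -> float:
    """Weighted sum of risk signals; higher means run earlier."""
    return (
        WEIGHTS["change"] * (1.0 if entry.touches_changed_code else 0.0)
        + WEIGHTS["failures"] * entry.historical_failure_rate
        + WEIGHTS["complexity"] * entry.module_complexity
    )


def prioritize(entries: list[SuiteEntry], budget: int) -> list[SuiteEntry]:
    """Return the `budget` highest-risk tests, highest first."""
    return sorted(entries, key=risk_score, reverse=True)[:budget]


suite = [
    SuiteEntry("test_profile", False, 0.05, 0.2),
    SuiteEntry("test_checkout", True, 0.20, 0.8),
    SuiteEntry("test_search", False, 0.60, 0.5),
]
print([e.name for e in prioritize(suite, budget=2)])
```

With these sample values, `test_checkout` ranks first because it touches changed code, and `test_search` ranks second because of its failure history.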

3. Self-Healing Automation with Governance

UI changes break scripts.

AI-based self-healing systems can:

- Detect DOM shifts

- Suggest alternative locators

- Adapt selectors dynamically

- Learn patterns over time

However, long-term sustainability requires audit controls. Self-healing without governance introduces hidden risk.

The next decade will see smarter hybrid systems — adaptive but traceable.

This is maintenance transformation at scale.
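One way to picture "adaptive but traceable" is a locator that falls back to alternatives and records every substitution for later review. The sketch below is a simplified model, not the API of any real tool: the `HealingLocator` class is hypothetical, and the page is represented as a plain set of selectors that currently match.

```python
from dataclasses import dataclass, field


@dataclass
class HealingLocator:
    primary: str
    fallbacks: list[str]
    audit_log: list[dict] = field(default_factory=list)

    def resolve(self, page_selectors: set[str]) -> str:
        """Return a selector that matches the page, logging any substitution."""
        if self.primary in page_selectors:
            return self.primary
        for candidate in self.fallbacks:
            if candidate in page_selectors:
                # Governance hook: every heal is recorded and starts unapproved,
                # so a human can confirm the new selector targets the same element.
                self.audit_log.append(
                    {"broken": self.primary, "healed_to": candidate, "approved": False}
                )
                return candidate
        raise LookupError(f"no locator matched for {self.primary!r}")


locator = HealingLocator("#submit-btn", ["button[type=submit]", "text=Submit"])
print(locator.resolve({"text=Submit", "#cancel-btn"}))
print(locator.audit_log)
```

The design choice worth noting is that healing never silently rewrites the primary locator. The test keeps running, but the change stays visible until someone approves it.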

4. Flaky Test Pattern Intelligence

Flaky tests damage trust more than failing tests.

AI clustering models can:

- Detect intermittent instability

- Classify environmental vs product defects

- Identify timing anomalies

- Suggest stability improvements

Over time, QA dashboards will shift from “pass/fail” metrics to confidence scoring systems.

Testing maturity will be measured by signal clarity — not volume.
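Before any clustering model is involved, a simple flip-rate heuristic already separates intermittent results from consistent ones. The sketch below assumes each history is a list of pass/fail results for the same test on the same code revision. The 0.3 threshold and the label names are assumptions chosen for illustration.

```python
def flip_rate(history: list[bool]) -> float:
    """Share of consecutive run pairs whose outcome changed (pass <-> fail)."""
    if len(history) < 2:
        return 0.0
    flips = sum(1 for a, b in zip(history, history[1:]) if a != b)
    return flips / (len(history) - 1)


def classify(history: list[bool], flaky_threshold: float = 0.3) -> str:
    """Label a test's run history; True means the run passed."""
    if not history:
        return "unknown"
    if all(history):
        return "stable"
    if not any(history):
        return "failing"
    # Mixed results: frequent flips suggest flakiness, a single switch
    # from pass to fail looks more like a consistent breakage.
    return "flaky" if flip_rate(history) >= flaky_threshold else "consistent-change"


print(classify([True, False, True, True, False, True]))
print(classify([True, True, True, True, False, False, False, False]))
```

A flip rate like this could also feed a confidence score: the more often a test changes its answer without a code change, the less weight its result deserves.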

5. Synthetic Data & Privacy-Compliant Simulation

Data privacy regulations are tightening globally.

AI-generated synthetic data enables:

- Realistic domain simulation

- Edge case amplification

- Anonymized production-like scenarios

- Large-scale scenario expansion

As compliance requirements increase, synthetic data generation will become a strategic necessity.

Not for convenience — for legal sustainability.
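The simplest form of synthetic data is seeded random generation, sketched below. It contains no production values at all, which is what makes it privacy-safe, but it also does not learn realistic distributions the way a trained generator would. The record fields and value ranges are hypothetical. The `.test` top-level domain is reserved for testing, so the generated addresses cannot belong to real users.

```python
import random
import string


def synthetic_customer(rng: random.Random) -> dict:
    """Build one fake customer record from the given random source."""
    local_part = "".join(rng.choices(string.ascii_lowercase, k=8))
    return {
        "customer_id": rng.randrange(10**6, 10**7),
        "email": f"{local_part}@example.test",
        "age": rng.randint(18, 90),
        "balance": round(rng.uniform(0, 10_000), 2),
    }


def synthetic_batch(n: int, seed: int = 42) -> list[dict]:
    """Generate n records; the same seed always yields the same batch."""
    rng = random.Random(seed)
    return [synthetic_customer(rng) for _ in range(n)]


for record in synthetic_batch(3):
    print(record)
```

Seeding matters for testing: a failure found with one batch can be reproduced exactly by reusing the seed.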

6. Predictive Defect Analytics & Release Confidence Scoring

Instead of asking:

“Did all tests pass?”

Future organizations will ask:

“What is the release confidence probability?”

AI systems trained on historical patterns can estimate:

- Defect leakage probability

- Regression likelihood

- High-risk modules

This is a shift from binary validation to probabilistic quality assessment.

In a decade, confidence scoring may become standard in release governance.
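A probabilistic release score can be sketched with a logistic function over a few release signals. The coefficients below are invented for illustration and are not fitted to any data. In practice they would come from a model trained on an organization's own release history, and the input features would differ.

```python
import math

# Assumed coefficients for illustration only; a real model would be fitted.
COEFFICIENTS = {
    "intercept": -3.0,
    "changed_files": 0.04,
    "failed_tests": 0.8,
    "high_risk_modules": 0.5,
}


def defect_leakage_probability(
    changed_files: int, failed_tests: int, high_risk_modules: int
) -> float:
    """Logistic estimate of the chance that a defect escapes to production."""
    z = (
        COEFFICIENTS["intercept"]
        + COEFFICIENTS["changed_files"] * changed_files
        + COEFFICIENTS["failed_tests"] * failed_tests
        + COEFFICIENTS["high_risk_modules"] * high_risk_modules
    )
    return 1.0 / (1.0 + math.exp(-z))


def release_confidence(
    changed_files: int, failed_tests: int, high_risk_modules: int
) -> float:
    """Confidence is the complement of the estimated leakage probability."""
    return 1.0 - defect_leakage_probability(changed_files, failed_tests, high_risk_modules)


print(round(release_confidence(changed_files=10, failed_tests=0, high_risk_modules=1), 3))
print(round(release_confidence(changed_files=40, failed_tests=2, high_risk_modules=3), 3))
```

The point of the sketch is the shape of the answer: a number between 0 and 1 that falls as risk signals accumulate, instead of a single pass/fail flag.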


7. AI Testing for AI Systems

As applications embed machine learning models, QA must evolve.

Testing AI systems includes:

- Bias detection

- Fairness validation

- Model drift monitoring

- Adversarial robustness checks

- Data integrity assurance

This category will expand significantly over the next 10 years.

Software testing will increasingly include validating non-deterministic systems.

That requires new frameworks, not just improved automation.
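Model drift monitoring is one item on this list that can be shown in a few lines. A common approach is the Population Stability Index (PSI), which compares the distribution of a feature or score at training time with what the model sees in production. The sketch below is a plain-Python version with equal-width bins. The bin count and the small floor value are assumptions, and the often-quoted alert thresholds (around 0.1 and 0.25) are rules of thumb, not standards.

```python
import math


def psi(expected: list[float], actual: list[float], bins: int = 10) -> float:
    """Population Stability Index between a baseline and a current sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # guard against a constant baseline

    def proportions(values: list[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            # Values outside the baseline range fall into the edge bins.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # A small floor avoids log(0) for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


baseline = [i / 100 for i in range(100)]
shifted = [v + 0.5 for v in baseline]
print(psi(baseline, baseline))
print(psi(baseline, shifted))
```

An identical sample scores 0, and the shifted sample scores well above the usual alert thresholds, which is the signal a drift monitor would raise.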

8. Conversational Debugging & Intelligent Triage

Large language models already assist in:

- Log summarization

- Stack trace explanation

- Root cause suggestion

- Build failure analysis

Over the next decade, conversational debugging layers will integrate directly into CI pipelines.

Engineers will move from manually parsing logs to interacting with intelligent diagnostic systems.

Time-to-understanding will shrink dramatically.
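Much of the value in automated triage comes from reducing noise before a language model or an engineer reads anything. The sketch below is a deterministic pre-processing step, not an LLM integration: it masks numbers and hex addresses so that failures differing only in those details collapse into one group. The sample log lines are invented for illustration.

```python
import re
from collections import defaultdict


def signature(log_line: str) -> str:
    """Mask variable parts of a failure message so similar failures match."""
    masked = re.sub(r"0x[0-9a-fA-F]+", "<hex>", log_line)
    masked = re.sub(r"\d+", "<n>", masked)
    return masked.strip()


def group_failures(lines: list[str]) -> dict[str, list[str]]:
    """Group raw failure lines by their masked signature."""
    groups: dict[str, list[str]] = defaultdict(list)
    for line in lines:
        groups[signature(line)].append(line)
    return dict(groups)


failures = [
    "TimeoutError: waited 3000 ms for #login",
    "TimeoutError: waited 5000 ms for #login",
    "NullPointerException at 0x7ffa12",
]
for sig, members in group_failures(failures).items():
    print(len(members), sig)
```

Each group can then be summarized once, which keeps a conversational layer focused on distinct problems instead of repeated symptoms.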

What Will Not Change in 10 Years

AI will not replace:

- Ethical release decisions

- Domain judgment

- Business risk trade-offs

- Exploratory creativity

- Accountability

The strongest QA teams will operate as hybrid intelligence systems.

AI handles pattern detection and optimization.

Humans handle context, consequence, and judgment.

This balance is not temporary.

It is foundational.


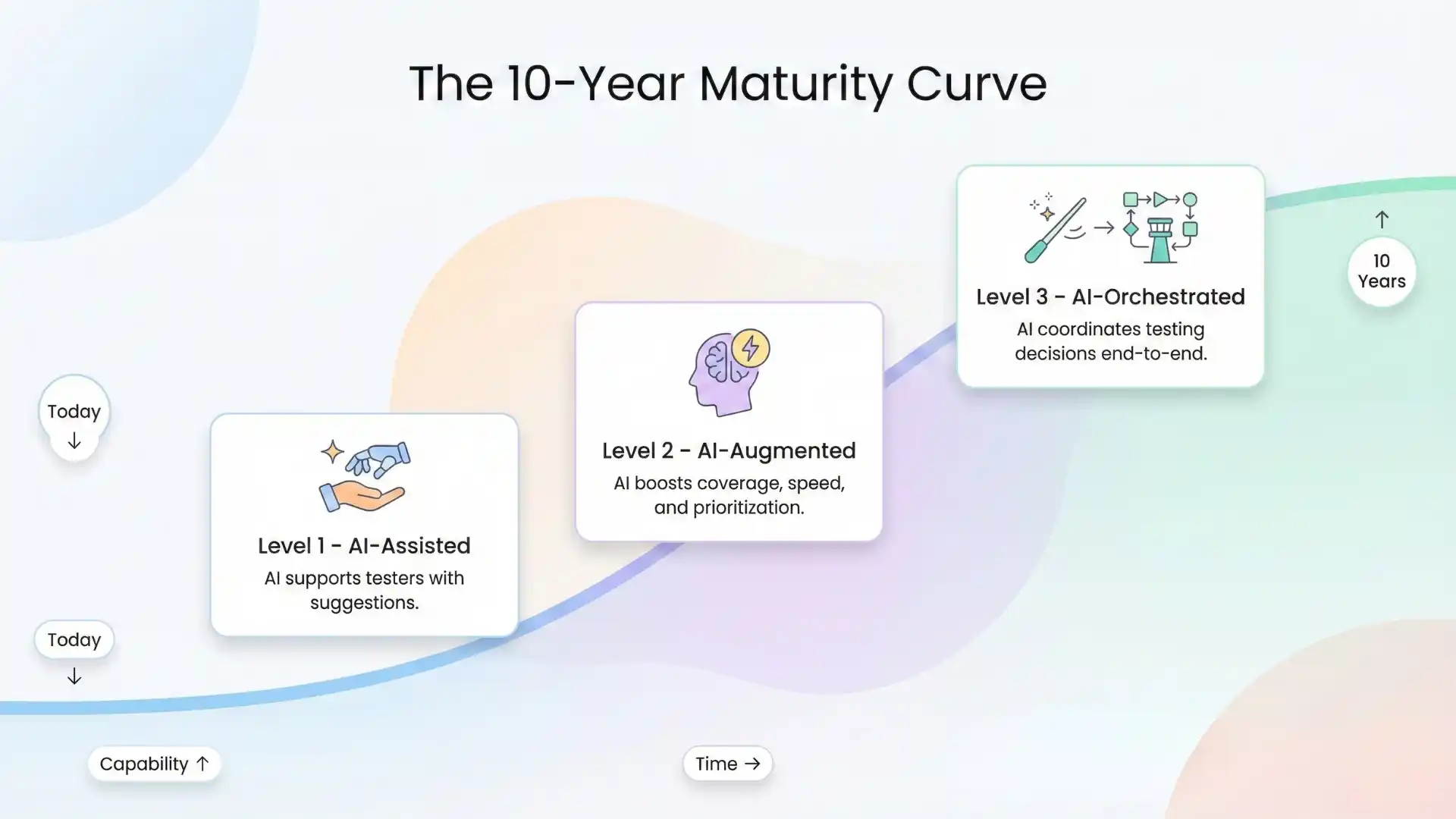

The 10-Year Maturity Curve

Organizations adopting AI in testing today fall into three levels:

Level 1 – AI-Assisted

Documentation, basic generation, simple analytics.

Level 2 – AI-Augmented

Predictive prioritization, defect forecasting, synthetic data, advanced dashboards.

Level 3 – AI-Orchestrated

Autonomous test adaptation, release confidence scoring, dynamic pipeline decisions.

Most enterprises today are transitioning between Level 1 and Level 2.

The next decade will determine who moves toward orchestration and who remains tool-driven.

The Bigger Insight

The next 10 years of software testing will not be about writing more scripts.

They will be about designing intelligent validation systems.

Quality engineering will become:

- Data-informed

- Probability-aware

- Risk-optimized

- AI-governed

Engineers who understand both automation and AI literacy will lead this transition.

At Testleaf, we believe the future QA engineer is not just an automation specialist — but a systems thinker who understands how intelligence integrates into validation strategy.

Because quality is no longer about verifying output.

It is about managing complexity.

And complexity requires intelligence.

Final Thought

AI in software testing is not a shortcut.

It is a structural evolution.

The teams that treat it as hype will constantly chase tools.

The teams that treat it as infrastructure will build resilience that lasts a decade.

The future of testing is not just automated.

It is intelligent.

FAQs

1. What are the most important AI use cases in software testing?

Key use cases include predictive testing, self-healing automation, synthetic data generation, and intelligent test prioritization.

2. How does AI improve software testing?

AI improves testing by predicting risks, optimizing test coverage, reducing flakiness, and accelerating CI/CD pipelines.

3. Will AI replace QA engineers?

No. AI augments QA engineers by automating repetitive tasks while humans focus on strategy and validation.

4. What is predictive testing in QA?

Predictive testing uses historical data and AI models to identify high-risk areas and prioritize testing efforts.

5. Why is synthetic data important in testing?

Synthetic data enables privacy-compliant testing while simulating real-world scenarios at scale.


Author’s Bio:

Content Writer at Testleaf, specializing in SEO-driven content for test automation, software development, and cybersecurity. I turn complex technical topics into clear, engaging stories that educate, inspire, and drive digital transformation.

Ezhirkadhir Raja

Content Writer – Testleaf