For years, QA teams treated AI as a useful add-on.

A faster way to draft test cases.

A smarter way to summarize bugs.

A better assistant for automation.

That phase is over.

In 2026, AI is no longer a side tool in software testing. It is becoming part of the operating model of modern quality engineering. The shift is not happening because AI is trendy. It is happening because release cycles are faster, systems are more complex, and teams are being asked to improve quality without slowing delivery. Industry research now frames generative AI and agentic technologies as forces actively reshaping how software is built, tested, and trusted.

The real question for QA leaders is no longer, “Should we use AI in testing?”

It is, “Where should AI be embedded in the QA workflow, and where must human judgment remain in control?”

Why is AI in software testing no longer optional?

AI in software testing is no longer optional because release cycles are faster, systems are more complex, and QA teams are expected to improve quality without slowing delivery. The biggest value comes when AI is embedded into the workflow, not used as a side experiment.

Key takeaways

- AI in QA is now part of the operating model, not just a helpful add-on.

- The real value of AI comes from redesigning how quality work gets done.

- AI helps most with test design, edge-case expansion, triage, maintenance, and prioritization.

- Human validation still matters for business risk, customer trust, and domain judgment.

- The strongest QA teams combine AI speed with human accountability.

Why AI in QA stopped being optional

Most software teams are no longer testing simple, predictable applications. They are testing fast-moving platforms, API-heavy systems, personalized user journeys, and increasingly, products that include AI features themselves.

That changes everything.

Traditional QA still matters, but traditional QA alone does not scale well against modern delivery pressure. McKinsey’s 2025 State of AI survey found that organizations creating the most value are not simply adding AI tools; they are redesigning workflows, assigning leadership ownership, and defining where human validation is required. That is the key lesson for testing teams too: AI creates value when it changes how work gets done, not when it is used for isolated experiments.

The World Quality Report 2025–2026 makes the same broader point from a quality-engineering lens. It describes generative AI and agentic technologies as no longer distant concepts, but active forces reshaping how solutions are built, tested, and trusted.

That is why AI in software testing is no longer optional. Not because every team must chase hype, but because quality work itself is being redefined.


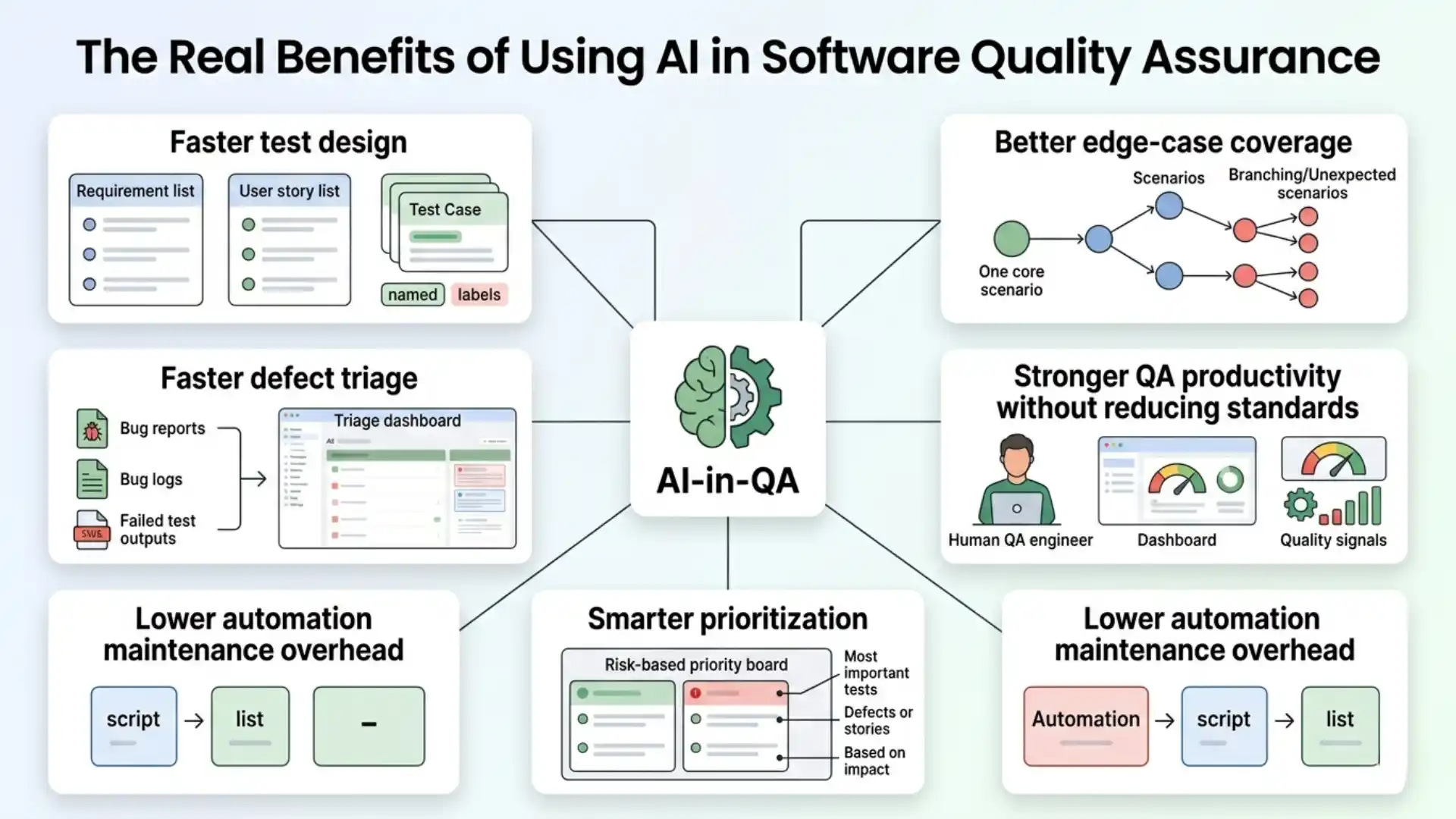

The real benefits of using AI in software quality assurance

The phrase “benefits of AI in QA” often leads to shallow lists. The real benefits are more meaningful when tied to actual testing work.

1. Faster test design

AI can help convert requirements, user stories, and acceptance criteria into first-draft test scenarios far more quickly than manual brainstorming alone. GitHub reported in 2024 that AI coding tools were increasingly being used for test case generation, helping teams create tests faster and improve coverage.

This does not eliminate tester thinking. It gives testers a stronger starting point.

2. Better edge-case coverage

Strong testers already think in boundaries, alternate flows, and failure paths. AI can help expand that thinking by suggesting missing combinations, negative cases, and unusual input conditions that may be overlooked under time pressure. That makes QA more complete, especially in complex regression cycles.
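To make this concrete, here is a minimal Python sketch of classic boundary-value analysis for an inclusive numeric range, used as a checklist to spot gaps in a set of AI-suggested edge cases. It does not describe how any particular AI tool works; the function names `boundary_values` and `missing_edge_cases` and the 1 to 100 quantity field are hypothetical.

```python
def boundary_values(min_value: int, max_value: int) -> list[int]:
    """Return classic boundary-value test inputs for an inclusive integer range."""
    if min_value > max_value:
        raise ValueError("min_value must not exceed max_value")
    candidates = [
        min_value - 1,  # just below the valid range: should be rejected
        min_value,      # lower boundary: should be accepted
        min_value + 1,  # just inside the lower boundary
        max_value - 1,  # just inside the upper boundary
        max_value,      # upper boundary: should be accepted
        max_value + 1,  # just above the valid range: should be rejected
    ]
    # Very small ranges produce overlapping values, so de-duplicate while keeping order.
    return list(dict.fromkeys(candidates))


def missing_edge_cases(suggested: list[int], min_value: int, max_value: int) -> list[int]:
    """Return boundary values that a list of suggested test inputs does not cover."""
    covered = set(suggested)
    return [value for value in boundary_values(min_value, max_value) if value not in covered]


if __name__ == "__main__":
    # Hypothetical quantity field that accepts 1 to 100; these are the AI-suggested inputs.
    ai_suggested = [1, 50, 100]
    print(missing_edge_cases(ai_suggested, 1, 100))
```

For that quantity field, `missing_edge_cases([1, 50, 100], 1, 100)` returns `[0, 2, 99, 101]`, flagging the values just outside and just inside each boundary that the suggestion skipped.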

3. Faster defect triage

One of the most practical uses of AI is not generating more tests. It is helping teams understand failures faster. AI can cluster similar failures, summarize logs, identify likely causes, and draft clearer bug reports. That reduces the time between “test failed” and “team understands what happened.”
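A very simplified version of failure clustering can be sketched in Python by normalizing error messages into signatures, so that failures differing only in numbers or quoted values group together. AI triage tools may use far richer methods (for example, embeddings or log summarization); this regex-based grouping is only a stand-in, and `failure_signature`, `cluster_failures`, and the sample messages are hypothetical.

```python
import re
from collections import defaultdict


def failure_signature(message: str) -> str:
    """Normalize a failure message so that runs differing only in volatile values match."""
    signature = re.sub(r"0x[0-9a-fA-F]+", "<HEX>", message)  # memory addresses, ids
    signature = re.sub(r"\d+", "<N>", signature)             # timings, status codes, counts
    signature = re.sub(r"'[^']*'", "<STR>", signature)       # quoted selectors or values
    return signature.strip()


def cluster_failures(failures: dict[str, str]) -> dict[str, list[str]]:
    """Group test names by failure signature, largest cluster first."""
    clusters: dict[str, list[str]] = defaultdict(list)
    for test_name, message in failures.items():
        clusters[failure_signature(message)].append(test_name)
    # The biggest cluster is often the most useful place to start triage.
    return dict(sorted(clusters.items(), key=lambda item: len(item[1]), reverse=True))


# Hypothetical failures from one regression run.
SAMPLE_FAILURES = {
    "test_checkout_total": "TimeoutError: waited 30000 ms for '#pay-button'",
    "test_checkout_coupon": "TimeoutError: waited 30000 ms for '#apply-coupon'",
    "test_login_redirect": "AssertionError: expected status 302, got 500",
}

if __name__ == "__main__":
    for signature, tests in cluster_failures(SAMPLE_FAILURES).items():
        print(f"{len(tests)} x {signature}: {tests}")
```

Here the two checkout timeouts collapse into one signature, `TimeoutError: waited <N> ms for <STR>`, so a tester can investigate one likely cause instead of two separate tickets.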

4. Lower automation maintenance overhead

Automation teams spend too much time fixing brittle tests, updating locators, and reworking scripts after UI changes. AI-assisted maintenance can help identify likely repair paths, suggest updated selectors, and reduce wasted effort in repetitive upkeep. This is one reason interest in AI-first testing is growing across the industry.

5. Smarter prioritization

Not every test deserves equal execution priority in every cycle. AI can help teams rank tests based on recent code changes, historical failure patterns, or business-critical flows. That supports a more risk-based approach to quality instead of a one-size-fits-all regression mindset.
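The ranking idea can be sketched as a simple weighted risk score in Python. This is a hand-tuned heuristic rather than a trained model, and the `CandidateTest` fields, the weights, and the sample tests are hypothetical assumptions; in practice the coverage mapping and failure history would come from your own CI data.

```python
from dataclasses import dataclass

# Hypothetical weights; a team would tune these against its own release history.
WEIGHTS = {"change": 0.5, "history": 0.3, "critical": 0.2}


@dataclass
class CandidateTest:
    name: str
    covered_files: set[str]     # source files this test exercises (e.g. from coverage data)
    recent_failure_rate: float  # share of recent runs that failed, from 0.0 to 1.0
    business_critical: bool     # flagged by the team, e.g. checkout or login flows


def risk_score(test: CandidateTest, changed_files: set[str]) -> float:
    """Combine change impact, failure history, and business criticality into one score."""
    touches_change = 1.0 if test.covered_files & changed_files else 0.0
    critical = 1.0 if test.business_critical else 0.0
    return (
        WEIGHTS["change"] * touches_change
        + WEIGHTS["history"] * test.recent_failure_rate
        + WEIGHTS["critical"] * critical
    )


def prioritize(tests: list[CandidateTest], changed_files: set[str]) -> list[CandidateTest]:
    """Order tests so the highest-risk ones run first."""
    return sorted(tests, key=lambda test: risk_score(test, changed_files), reverse=True)


if __name__ == "__main__":
    changed = {"payment.py"}
    suite = [
        CandidateTest("test_profile_avatar", {"profile.py"}, 0.0, False),
        CandidateTest("test_checkout_total", {"cart.py", "payment.py"}, 0.1, True),
        CandidateTest("test_search_filters", {"search.py"}, 0.4, False),
    ]
    for test in prioritize(suite, changed):
        print(f"{risk_score(test, changed):.2f}  {test.name}")
```

With `payment.py` changed, the checkout test ranks first (it touches the change and is business-critical), the frequently failing search test second, and the untouched profile test last. Keeping the weights explicit means a human can review and adjust what "risky" means for the product.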

6. Higher QA productivity without lowering standards

Used well, AI lets teams spend less time on mechanical work and more time on judgment-heavy work. That is the real productivity gain. Not faster output for its own sake, but better use of tester attention.

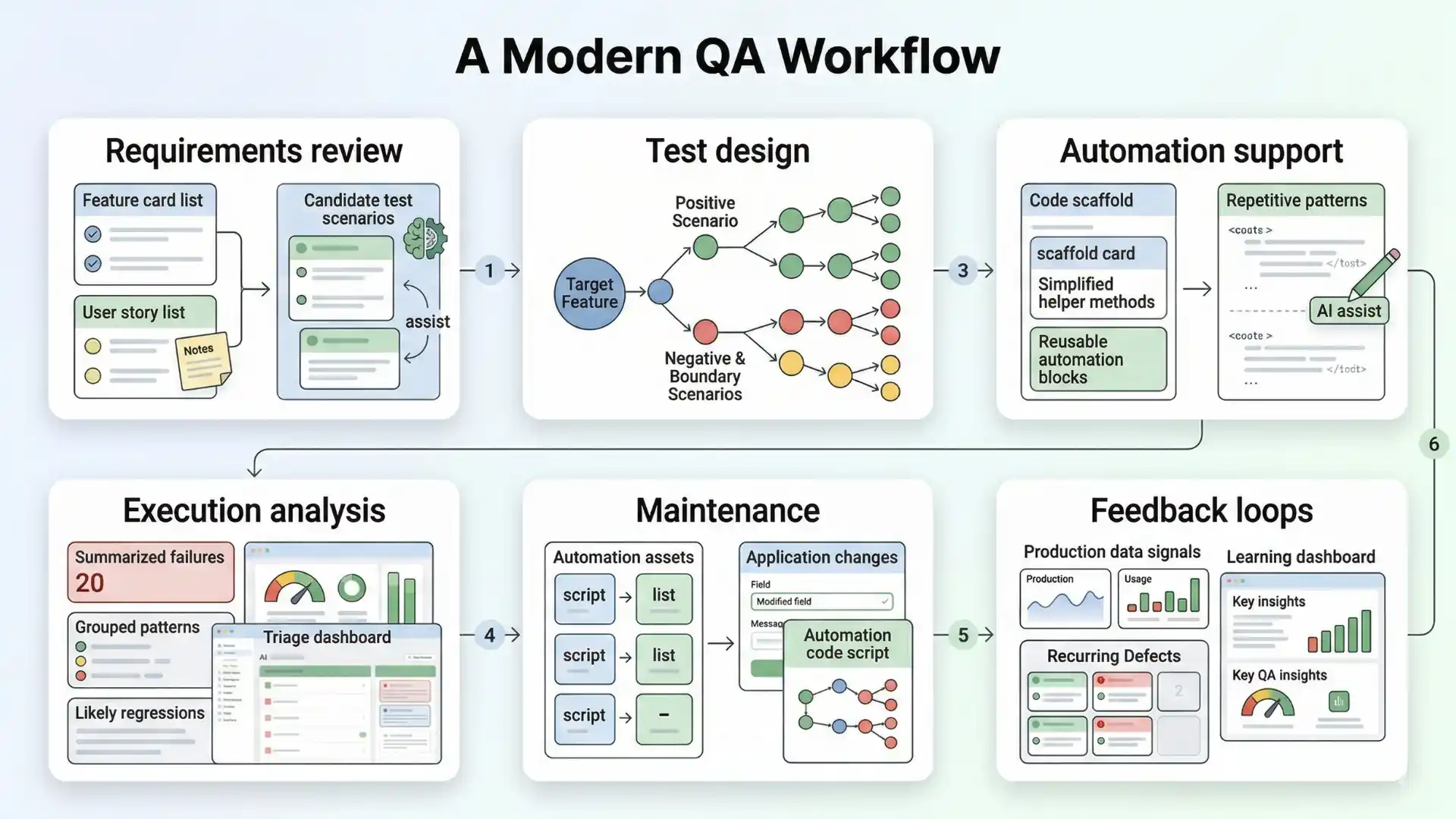

What actually changes in the QA workflow

This is where many articles stop too early.

AI does not merely make existing QA tasks a bit faster. It changes the structure of the workflow itself.

A modern QA workflow increasingly looks like this:

- Requirements review: AI helps turn features into candidate test scenarios.

- Test design: AI expands positive, negative, and boundary coverage ideas.

- Automation support: AI drafts scaffolds, helpers, and repetitive code patterns.

- Execution analysis: AI summarizes failures, patterns, and likely regressions.

- Maintenance: AI assists with updates when applications evolve.

- Feedback loops: AI helps teams learn from production signals and recurring defects.

That shift matters because it moves QA from document-heavy, manually repetitive work toward higher-value quality thinking.

In other words, AI is not replacing software testing. It is compressing low-value effort and increasing the importance of high-value judgment.


What AI still cannot replace in software quality assurance

This is where serious QA teams must stay grounded.

AI can generate.

It can summarize.

It can suggest.

It can accelerate.

But it does not truly understand your product the way an experienced tester does.

It does not own business risk.

It does not know which bug would break customer trust.

It does not carry domain context the way a strong QA engineer, QA lead, or product-aware tester can.

That is why human validation remains essential. McKinsey found that organizations getting more value from AI are more likely to define when model outputs require human validation. GitHub’s research also notes that while AI helps with activities like test generation, human review still matters.

So the mature position is not “AI will replace QA.”

The mature position is this:

AI should handle repetition. Humans should own judgment.

What high-performing QA teams are doing differently

The teams adapting best are not using AI randomly.

They are doing three things well.

First, they are embedding AI into workflow stages, not treating it as a novelty tool.

Second, they are keeping humans in the loop for business-critical quality decisions.

Third, they are building new QA capability, including prompt design, API-level thinking, observability, and AI-output validation.

That mirrors broader industry signals. The World Quality Report highlights AI’s transformative potential in quality engineering, while McKinsey shows that value comes more reliably when organizations redesign workflows and formalize governance.

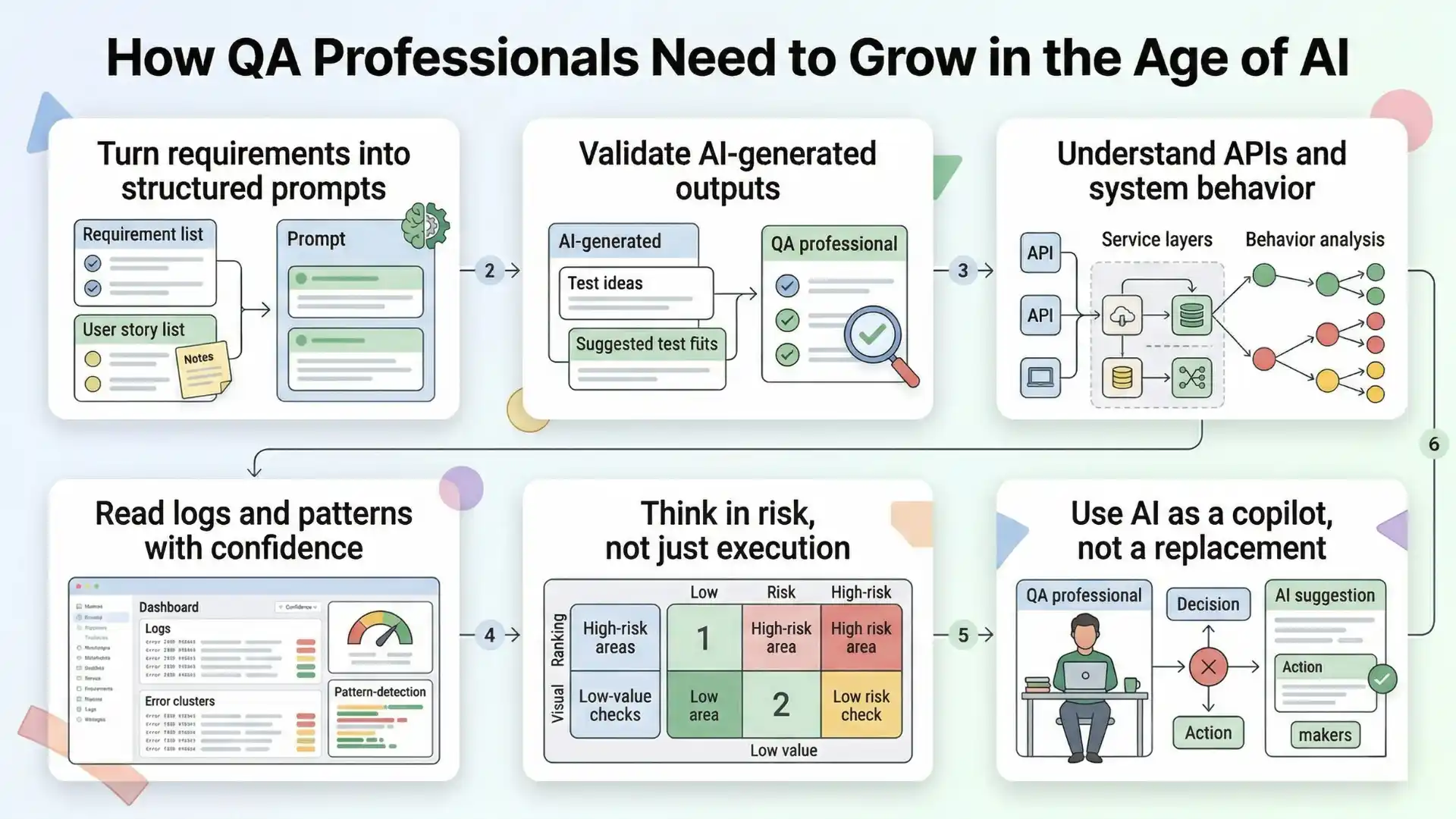

What QA professionals should learn next

If AI is becoming part of the QA operating model, the skill shift is clear.

Testers do not need to become full-time data scientists.

But they do need to become stronger in:

- turning requirements into structured prompts,

- validating AI-generated outputs,

- understanding APIs and system behavior,

- reading logs and patterns with more confidence,

- thinking in risk, not just execution,

- using AI as a copilot without outsourcing accountability.

That aligns with where the market is already moving. testRigor’s 2026 career-prep guidance emphasizes fundamentals, automation, APIs, CI/CD, product thinking, and AI-assisted workflows. At the same time, Stack Overflow’s 2025 survey shows that AI tool usage is high, yet trust is more mixed than before. That combination is important: adoption is real, but blind trust is not.

And that is healthy.

Because strong QA has never been about using tools blindly. It has always been about creating confidence intelligently.

AI in software testing is no longer optional because modern QA teams need help handling faster delivery, growing system complexity, and repetitive testing work, while still keeping human judgment in control of quality risk.

Final thought

AI will not remove the need for software quality assurance.

But it will change which QA teams move faster, see risk earlier, and stay relevant longer.

The winners will not be the teams that automate everything blindly.

They will be the teams that combine AI speed with human judgment.

That is the future of QA.

Not less thinking.

Better thinking, amplified.

If you are in QA today, this is the mindset shift that matters most:

AI is no longer optional in software testing, but human quality leadership is more valuable than ever.

FAQs

Why is AI in software testing no longer optional?

AI is no longer optional because QA teams are working under faster release cycles, greater system complexity, and higher pressure to improve quality without slowing delivery.

What are the real benefits of AI in QA?

The real benefits include faster test design, better edge-case coverage, faster defect triage, lower maintenance overhead, smarter prioritization, and better productivity without lowering standards.

Can AI replace software testers?

No. AI can generate, summarize, suggest, and accelerate, but it does not replace product understanding, business-risk judgment, or customer-trust decisions.

Where does AI fit inside the QA workflow?

AI fits inside requirements review, test design, automation support, execution analysis, maintenance, and feedback loops from production and recurring defects.

What should QA professionals learn next?

QA professionals should get stronger at structured prompting, validating AI output, understanding APIs and system behavior, reading logs and patterns, thinking in risk, and using AI as a copilot without giving away accountability.